Best AI Tools for Academic Writing 2026: An ECR Guide

For early-career researchers (ECRs), academic writing is rarely just a matter of drafting faster. The harder task is producing work that can withstand reviewer scrutiny while you are also managing revisions, literature, and competing deadlines. That publication-stage pressure is what shapes this 2026 update to our 2025 guide to the best AI tools for academic writing.

The OECD’s 2026 work on generative AI draws a useful distinction between shortcut-style uses that offload thinking and slower uses that support reasoning and revision. In academic writing, that distinction is practical. The most useful tools are not the ones that produce the most text. They are the ones that help you clarify structure, compare evidence, refine technical language, and prepare a manuscript for submission without weakening your control over the argument.

This guide focuses on the AI tools most useful to early-career researchers writing for publication, revision, and review. Instead of trying to cover every app in the academic AI market, it selects the tools that address common high-stakes writing problems well, from literature synthesis and methods reporting to technical drafting and pre-submission feedback.

Quick Comparison: AI Tools for Early-Career Research Writing

| Tool | Core ECR Workflow | Key Function |
|---|---|---|
| Grantable | Grant Narratives | Manages federal RFP narratives and maintains persistent institutional memory to support compliance. |
| Litmaps | Research Gap Mapping | Visually maps literature networks to help you articulate and justify a distinct research gap. |
| thesify | Pre-Submission Review | Checks structure, argument flow, and evidence use before supervisor, co-author, or journal review. |
| SciScore | Methods Reporting | Automates compliance checks for reporting frameworks (ARRIVE, MDAR) and checks for RRIDs. |
| Consensus | Literature Synthesis | Extracts and synthesizes source-grounded findings from peer-reviewed databases. |
| Trinka | Technical Formatting | Corrects highly specialized STEM phrasing and checks manuscripts against AMA/APA styles. |
| Overleaf | Collaborative Drafting | Manages multi-author mathematical and scientific manuscripts in LaTeX, with AI integrations. |
| DeepL Pro | Bilingual Precision | Translates complex technical writing into publishable academic English, with enterprise-grade security. |

The Ethical Framework: Data Privacy and Publisher AI Policies in 2026

Early-career researchers operate in a publishing environment that demands clear authorship, transparent disclosure, and strict data handling. Before integrating any AI tool into an article, grant, or review workflow, you must verify two things: the policy of your target publisher and the data terms of the tool itself.

Publisher Rules That Shape AI Use in 2026

Across major publisher policies and publication-ethics guidance, three rules now shape how AI can be used in academic writing.

Strict Prohibition of AI Authorship: Artificial intelligence models, including Large Language Models (LLMs), cannot be listed as authors or co-authors under any circumstances. Elsevier and Springer Nature both state that authorship requires human accountability for accuracy, originality, integrity, and final approval. That responsibility remains with the author.

Mandatory Disclosure and Transparency: Use of generative AI tools in preparing a manuscript must be declared at submission. Elsevier requires an AI declaration statement for AI use in manuscript preparation and asks authors to record the tool name, purpose, and degree of oversight. Springer Nature likewise requires transparent declaration of generative AI use. Basic grammar, spelling, and punctuation support is generally exempt from these declarations, unlike drafting or analytical assistance, although journals differ on exactly where that line falls.

Restrictions on Generative Imagery: Partly to guard against fabricated or manipulated data, publishers prohibit the use of generative AI to create or alter scientific images, figures, or artwork in submitted manuscripts. The main exception applies when AI-generated or AI-altered imagery is itself part of the research design or methods. Elsevier, for example, does not permit generative AI or AI-assisted tools to create or alter images in submitted manuscripts, except where AI use forms part of the research design or methods and is documented in a reproducible way. Springer Nature takes a similar position.
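One way to keep the disclosure trail manageable across revisions is to log each AI use as you go. The Python sketch below is a minimal illustration under stated assumptions: the `AIUseRecord` fields mirror the tool name, purpose, and oversight details Elsevier asks authors to record, but the record format, the `EXEMPT_PURPOSES` rule, and the generated wording are hypothetical and do not reproduce any publisher's template.

```python
from dataclasses import dataclass, field

# Hypothetical simplification: purposes treated as basic language support and
# exempt from disclosure. Always confirm against the target journal's policy.
EXEMPT_PURPOSES = {"grammar", "spelling", "punctuation"}


@dataclass
class AIUseRecord:
    """One logged use of an AI tool during manuscript preparation."""
    tool: str                                          # product name
    purpose: str                                       # what the tool was used for
    sections: list[str] = field(default_factory=list)  # where in the manuscript
    oversight: str = ""                                # how the author reviewed the output

    def needs_disclosure(self) -> bool:
        # Anything beyond basic language checks is treated as disclosable.
        return self.purpose.lower() not in EXEMPT_PURPOSES


def draft_declaration(records: list[AIUseRecord]) -> str:
    """Turn the log into a draft statement for the authors to review and edit."""
    disclosed = [r for r in records if r.needs_disclosure()]
    if not disclosed:
        return "No disclosable generative AI use recorded."
    sentences = []
    for r in disclosed:
        where = ", ".join(r.sections) if r.sections else "the manuscript"
        sentences.append(
            f"The author(s) used {r.tool} for {r.purpose} in {where}. {r.oversight}".strip()
        )
    return " ".join(sentences)


# Hypothetical log for one manuscript.
log = [
    AIUseRecord("Trinka", "grammar"),
    AIUseRecord("DeepL Pro", "translation", ["the abstract"],
                "The authors checked all output against the original text."),
]
print(draft_declaration(log))
```

The generated text is only a starting point; the final declaration should follow the target journal's own wording and be reviewed by every author.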

COPE (the Committee on Publication Ethics) adds a further layer. It is not a publisher, but its peer-review guidance remains highly influential across scholarly publishing. Its guidelines stress confidentiality, responsible handling of unpublished material, and editorial permission before involving other people in a review. That matters for AI use because many writing and review tools process user inputs on external servers.

Data Privacy, Public LLMs, and Zero Data Retention

Beyond publisher compliance, ECRs must actively manage data privacy risks. Uploading unpublished methodologies, sensitive patient data, or novel grant proposals into a public LLM can breach confidentiality. Some consumer AI products use inputs to train future models unless the user opts out, so a researcher's unpublished findings could, in principle, surface in outputs for other users, compromising the work's novelty and potentially affecting future patent claims.

Consumer and enterprise products operate under different data terms. OpenAI states that ChatGPT Business, ChatGPT Enterprise, ChatGPT Edu, and the API do not train on customer data by default. For researchers working with unpublished manuscripts, reviewer comments, grant materials, or sensitive participant information, that distinction matters. A tool that is acceptable for low-stakes drafting may not be appropriate for publication-stage writing if its data terms are unclear.

Zero Data Retention is a contractual standard, not a generic product label. Anthropic’s privacy documentation states that some approved enterprise API customers may have zero-retention arrangements, but that these arrangements are separate from the default retention model and apply only to eligible API-based products unless otherwise agreed. In practice, that means researchers should verify whether a provider’s retention claims apply to the specific product and plan they are considering, not assume that “private” and “zero retention” mean the same thing.

For publication-stage writing, privacy verification belongs in tool selection, alongside feature comparison rather than after the workflow has already been built. Before you upload unpublished text, check whether the provider trains on your inputs, whether retention controls exist, whether enterprise terms are available, and whether confidential material can be processed under the relevant agreement.
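The four checks above can be recorded per product and plan, so that unclear terms count as open questions rather than as safe defaults. A minimal Python sketch follows; `DataTerms`, `open_questions`, and the example products are hypothetical, and the answers come from your own reading of each provider's terms, not from any provider API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataTerms:
    """Answers recorded from a provider's published terms for one product and plan.

    None means the terms were unclear, which is treated the same as a failed check.
    """
    product: str
    trains_on_inputs: Optional[bool]
    retention_controls: Optional[bool]
    enterprise_terms_available: Optional[bool]
    confidential_processing_allowed: Optional[bool]


def open_questions(terms: DataTerms) -> list[str]:
    """Return the checks that failed or could not be verified; empty means none outstanding."""
    issues = []
    if terms.trains_on_inputs is not False:
        issues.append("provider may train on inputs")
    if terms.retention_controls is not True:
        issues.append("no confirmed retention controls")
    if terms.enterprise_terms_available is not True:
        issues.append("no confirmed enterprise terms")
    if terms.confidential_processing_allowed is not True:
        issues.append("confidential processing not confirmed")
    return issues


# Hypothetical products, filled in by hand from each provider's terms.
consumer_app = DataTerms("Example consumer chatbot", None, False, False, None)
enterprise_plan = DataTerms("Example enterprise plan", False, True, True, True)

print(open_questions(consumer_app))     # four open questions
print(open_questions(enterprise_plan))  # []
```

Treating `None` as a failure is the deliberate design choice here: it reflects the point that a "private" label is not the same as verified retention and training terms for the specific plan you use.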

Workflow 1: Grant Proposals and Funding Narratives

Grant proposals fall within academic writing, but they operate under a different set of constraints than journal articles. A strong proposal has to establish a fundable research gap, align the aims with the sponsor’s priorities, and maintain consistency across the narrative, methods, budget justification, timeline, and institutional context. For early-career researchers, that work usually unfolds under short deadlines and repeated revision cycles.

In grant writing, AI is most useful when it supports structure, literature positioning, and cross-section consistency. The relevant question is whether the proposal stays aligned from the opening rationale to the final deliverables.

Grantable: Best for RFP-Aligned Narrative Drafting

Best for: Reusing verified institutional language across complex funding applications.

Federal and institutional calls often require the same core information to appear in multiple places: specific aims, facilities descriptions, investigator biographies, compliance statements, and budget justifications. That creates a writing problem as much as an administrative one. A proposal can lose force when these sections drift in emphasis, terminology, or level of specificity.

Grantable is strongest when the bottleneck is narrative management. It supports controlled reuse of previously verified material, which helps a new proposal stay consistent with the current call’s structure and requirements. This is particularly useful when adapting earlier language to a new RFP without losing cross-section coherence.

Use it when:

the proposal includes repeated institutional or administrative language

the sponsor requires strict formatting and section-level consistency

you are revising an application across several internal and external versions

Watch for:

A consistent proposal still needs a sharp rationale. A tool in this category can help maintain alignment, but it does not replace the intellectual work of defining significance, articulating the gap, or writing aims that read as conceptually focused rather than administratively complete. After drafting, always seek grant proposal feedback.

Litmaps: Best for Research Gap Positioning in Grant Introductions

Best for: Clarifying the literature landscape behind a grant’s problem statement and rationale.

Grant introductions succeed when they show where the field stands, what remains unresolved, and why the proposed project deserves funding now. That requires more than a linear list of papers. It requires a usable picture of the conversation surrounding the topic.

Litmaps is most useful when you need to trace clusters of papers, follow citation paths, and see how a topic has developed over time. In grant writing, that helps you write a more precise account of the existing literature and a more defensible statement of novelty. It also makes it easier to distinguish foundational work from peripheral strands that do not need equal space in the proposal.

Use it when:

the literature review feels broad or descriptive

the proposal needs a clearer research gap

the introduction covers too many adjacent strands without clear prioritization

Watch for:

The map is not the final output. Its value lies in helping you turn a broad field into a focused narrative that explains what is already established, where your research gap sits, and why your proposed study matters now.

Workflow 2: Pre-Submission Review and Methods Reporting

Pre-submission review sits at the point where writing quality, reporting quality, and publication readiness meet. A draft can contain a strong research question and a defensible contribution while still weakening its own case through structural drift, unclear claims, thin interpretation, or incomplete methods reporting. For early-career researchers, these problems matter because they create avoidable revision rounds, weaken reviewer confidence, and slow the path from draft to submission. For a clearer distinction between internal review and journal review, see What Authors Need to Know About Pre-Submission Review vs Peer Review.

thesify: Best for Structural Review Before Submission

Best for: checking structure, argument flow, evidence use, and section-level alignment before supervisor, co-author, or journal review.

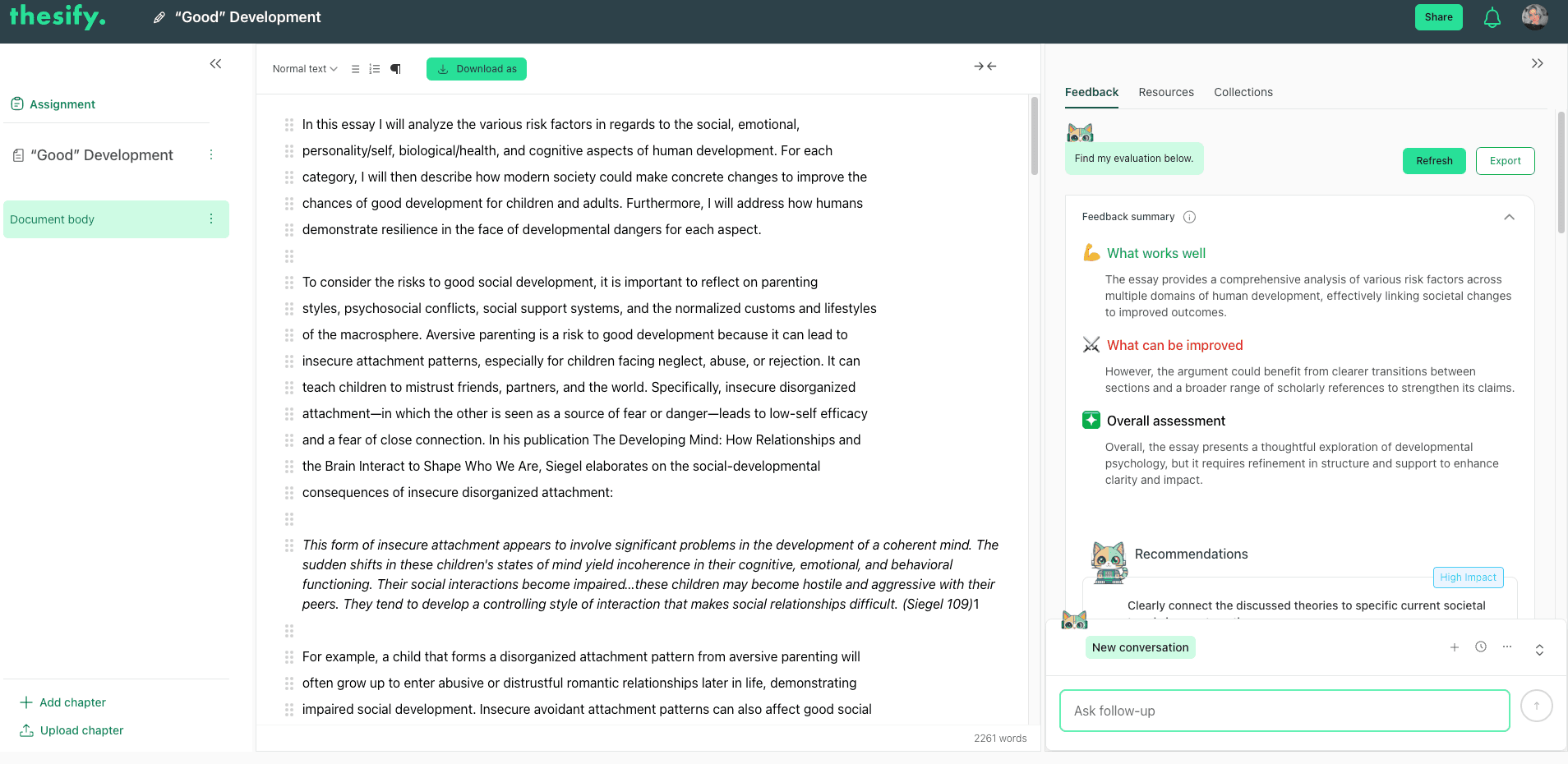

thesify fits this stage when the core problem sits above the sentence level. Its value lies in diagnostic feedback on the architecture of the draft: whether the research aim is easy to locate, whether sections build toward the same claim, whether interpretation keeps pace with the evidence, and whether the conclusion stays within the limits of what the draft actually demonstrates. That makes it a strong fit for article drafts, dissertation chapters, and grant narratives that need a rigorous internal review before external circulation.

thesify’s main feedback view shows how a draft is evaluated through summary feedback, structural comments, and numbered recommendations before submission.

The table below shows the main elements thesify helps authors check before submission:

| Draft Element | What to Check | Why It Matters |
|---|---|---|
| Research question or main claim | Is it explicit, bounded, and easy to locate? | Reviewers need to understand the article’s central claim quickly. |
| Section logic | Do sections build toward the same argument, or do they drift? | Structural drift weakens coherence and slows comprehension. |
| Evidence use | Are major claims supported and interpreted at the right level? | Thin interpretation and unsupported claims invite critical reviewer attention. |
| Scope and conclusion | Does the final argument reflect what the draft actually shows? | Overreach creates credibility problems at review. |
| Revision priorities | Are the main weaknesses visible before external review? | Earlier diagnosis reduces avoidable revision rounds. |

Following the review, thesify generates a downloadable PDF report titled Theo’s Review. The report turns manuscript-level feedback into a more usable revision agenda by organizing comments into scored categories and concrete recommendations. For early-career researchers working under time pressure, that makes it easier to separate structural problems from later-stage edits and to approach revision in a more deliberate order.

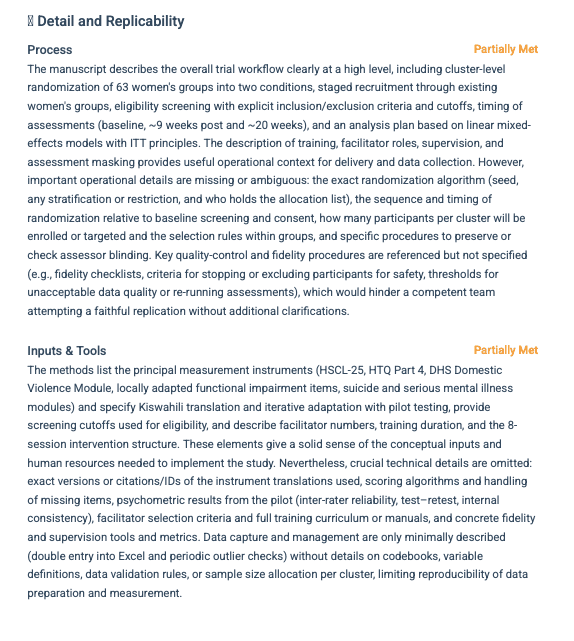

A page from thesify’s downloadable feedback report showing how detail, replicability, and methods reporting can be assessed before submission.

This matters most when a manuscript needs clearer reporting alongside stronger argument structure. The report makes it easier to identify which revisions require attention first.

SciScore: Best for Methods Reporting and Reproducibility Checks

Best for: checking whether a methods section reports the information journals and reviewers expect to see.

Methodological transparency is a writing issue as well as a compliance issue. In empirical fields, the methods section has to specify the design, reporting standards, resource details, and transparency markers that editors and reviewers look for during screening and review. Missing reporting elements can weaken a submission before editors and reviewers have seriously considered its argument.

SciScore is most suitable if your bottleneck sits inside methods reporting. It is designed to check for rigor and reproducibility markers tied to formal reporting standards and to flag omissions that matter at editorial screening. In practice, that makes it a strong fit for researchers preparing manuscripts in fields where reporting completeness carries significant weight.

Use it when:

the target journal applies formal reporting frameworks

the methods section includes specialized resources, models, or protocols

editorial screening is likely to focus heavily on transparency and reporting detail

Watch for:

SciScore helps identify missing reporting elements. It does not resolve questions of theoretical framing, section logic, or the fit between the research question and the design. That is why it works best as part of a narrower pre-submission stack rather than as a standalone writing tool.
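To make the idea of an automated reporting check concrete, the Python sketch below shows one very small piece of it: spotting RRIDs (Research Resource Identifiers) in a methods paragraph and flagging resource mentions that lack them. This is a hypothetical illustration, not SciScore's method; the regular expression is a simplified assumption about RRID syntax, the trigger words are arbitrary, and the accessions are placeholders.

```python
import re

# Simplified syntax check: "RRID:" followed by a registry prefix such as AB_
# (antibodies), SCR_ (software and tools), or CVCL_ (cell lines), then an
# accession. It does NOT confirm that an identifier exists in a registry.
RRID_PATTERN = re.compile(r"RRID:\s?([A-Za-z]+_[A-Za-z0-9_:\-]+)")

# Hypothetical trigger words for resources that usually need an RRID.
RESOURCE_TERMS = ("antibody", "cell line", "software")


def find_rrids(methods_text: str) -> list[str]:
    """Return every RRID accession that matches the simplified pattern."""
    return RRID_PATTERN.findall(methods_text)


def flag_missing_rrids(methods_text: str) -> list[str]:
    """Crude heuristic: list resource terms mentioned when no RRID appears at all."""
    if find_rrids(methods_text):
        return []
    lowered = methods_text.lower()
    return [term for term in RESOURCE_TERMS if term in lowered]


# Placeholder accessions, not real registry entries.
with_ids = "Sections were stained with an anti-GFP antibody (RRID:AB_0000001)."
without_ids = "A commercial cell line was stained with a primary antibody."

print(find_rrids(with_ids))             # ['AB_0000001']
print(flag_missing_rrids(without_ids))  # ['antibody', 'cell line']
```

A production checker would need registry lookups and far richer language handling; the sketch only shows why "missing reporting element" is a checkable property while theoretical framing is not.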

Workflow 3: Technical Drafting and Literature Synthesis

Discussion sections, background sections, and literature reviews place different demands on academic writing than grammar correction or surface-level editing. The priority is producing accurate, well-supported text that reflects the field clearly, uses evidence responsibly, and stays strictly within the limits of the verified sources.

The tools in this category are ideal when the writing problem begins before the sentence level. Discussion sections often weaken because the literature has not been compared carefully enough. Background sections become generic when the field has not been narrowed into a usable research gap. Technical drafts become harder to revise when field-specific phrasing is handled by tools built for general prose.

Consensus: Best for Evidence-Based Literature Framing

Best for: answering narrow research questions with source-grounded summaries before you draft or revise a literature-based section.

Consensus fits this workflow when the bottleneck is evidence overload. If you are evaluating the state of a field, checking whether a claim is well supported, or clarifying a narrow point in a discussion section, the problem is often not lack of information but lack of usable synthesis. In that context, Consensus is useful because it helps you move from a broad query to a more focused evidence picture.

Its value for academic writing lies in speed and scope control. Instead of building a background section from scattered search results, you can use it to identify recurring findings, narrow the question, and decide which sources need closer reading. That can improve the quality of a literature review or discussion section, especially when you are trying to avoid descriptive summary and move toward comparative interpretation. For a broader view of discovery and evidence-mapping tools, see Best AI Tools for Academic Research in 2026: A Step-by-Step Workflow Guide.

Use it when:

you need a fast evidence check before drafting a background paragraph

you want to narrow a broad literature question into something reviewable

you are comparing whether the field converges, diverges, or remains unsettled on a point

Watch for:

Consensus helps with literature framing. It does not replace direct reading, source evaluation, or citation verification. Any claim that matters to the argument still needs to be checked in the original paper.

Trinka: Best for Technical Academic English and Journal-Style Editing

Best for: refining technical phrasing, discipline-specific style, and journal-facing language in advanced academic drafts.

Trinka is most useful when the draft is already conceptually sound but still needs tighter technical expression. In STEM, medicine, engineering, and other specialist fields, sentence-level editing has to respect terminology, statistical phrasing, unit conventions, and disciplinary style. A general grammar checker often treats that language as a problem to simplify. For publication-stage writing, that is the wrong intervention.

For early-career researchers, Trinka fits best when the manuscript needs cleaner technical phrasing without losing precision: when the prose has become dense, when specialist wording needs refinement, or when the draft needs to move closer to journal style expectations. In this workflow, the value is not generic correction but stronger control over technical academic English in a manuscript that is already doing substantive analytical work.

This is especially relevant in disciplines where publication standards differ sharply across journals and subfields, and where title and abstract wording carries technical as well as stylistic weight.

Use it when:

the manuscript needs technical language support rather than structural diagnosis

a journal-facing draft needs cleaner discipline-specific phrasing

you are editing for style, readability, and consistency after the argument is already in place

Watch for:

Trinka works at the language layer. It does not resolve weak structure, missing evidence, or poor literature framing. It belongs later in the workflow, once the main analytical and organizational decisions have already been made.

Workflow 4: Collaborative Writing and Bilingual Precision

Early-career researchers often write in teams. Multi-author articles, cross-institutional projects, and internationally co-authored manuscripts place pressure on version control, stylistic consistency, and technical precision. In these settings, the writing problem is usually not idea generation. It is coordination. A manuscript can lose clarity when sections are drafted in different voices, when formatting breaks across revisions, or when technical phrasing shifts during translation and editing. These pressures become even sharper in fields where publication standards vary across subfields and journals, which is why differences in academic writing across disciplines remain relevant even in collaborative workflows.

Overleaf: Best for Multi-Author Scientific Writing in LaTeX

Best for: technically demanding manuscripts with multiple authors, equations, complex references, and journal-specific formatting requirements.

For STEM researchers, Overleaf remains one of the strongest tools for collaborative drafting when the manuscript depends on LaTeX. Its main value lies in shared document control. Multiple authors can draft, revise, comment on, and compile the same manuscript inside one environment, which reduces the risk of broken references, disturbed formatting, and conflicting file versions.

For early-career researchers, this matters most when the manuscript is technically demanding and collaboration itself has become part of the writing problem. A shared LaTeX workflow reduces formatting drift across sections, lowers the chance of version-control issues, and makes it easier to move from drafting to submission in journals that already expect LaTeX-based files. When the paper is stable at the formatting level but still needs stronger reasoning before submission, a final round of pre-submission review can help clarify whether the draft’s main claims are actually ready for external review.

Overleaf is especially useful when the manuscript includes equations, technical notation, large tables, or formatting requirements that standard word processors handle poorly. It is also a strong fit when several co-authors need to revise the same paper over time without producing fragmented draft chains. That said, a well-managed collaborative draft can still carry weak interpretation or underdeveloped claims. Before the final submission pass, it is often worth checking whether the paper has clearly articulated the strengths and weaknesses of the academic argument, and whether any section still falls into the kinds of weak analysis patterns that reviewers often flag.

Use it when:

the manuscript depends on LaTeX, equations, or complex technical formatting

several co-authors are revising the same draft

version control has become part of the writing problem

Watch for:

Overleaf improves coordination and formatting control

it does not diagnose structural weaknesses or unsupported claims

it is most useful after the core argument and section plan are already in place

DeepL Pro: Best for Technical Translation and Multilingual Drafting

Best for: Translating research writing into precise academic English while preserving technical meaning.

For bilingual researchers and international postdocs, language support often enters the workflow well before final proofreading. A discussion section, abstract, or methods section may already be conceptually strong but still require careful translation into English that preserves technical vocabulary, field-specific phrasing, and the exact relationship between claim and evidence.

DeepL Pro is ideal if your task is controlled translation rather than broad rewriting. Its strongest fit is with highly technical material where the loss of precision would weaken the manuscript. This makes it particularly relevant in medicine, engineering, and other research-intensive fields where publishable prose depends on exact terminology rather than smoother, general phrasing. This level of exactness is especially critical when optimizing a research paper’s title and abstract, where translated wording directly affects discoverability and indexing.

In publication-stage writing, this kind of tool belongs after the argument and structure are stable. Translation can improve fluency, but it should not replace structural revision, source verification, or disciplinary judgment. A technically accurate sentence in one language can still become less precise when translated into smoother English. Authors therefore need to preserve their academic voice and verify that translated claims still match the original meaning before any final proofreading pass.

Use it when:

You are translating a draft or section into academic English.

The manuscript relies on technical terminology that cannot be simplified casually.

The writing is already structurally sound and simply needs linguistic precision.

Watch for:

Translation support improves fluency, but it does not fix weak arguments or poor structure.

The final wording still requires field-specific review to ensure technical accuracy.

The translated output should always be rigorously checked against the original meaning before submission.

Building a Small Stack You Can Actually Defend

Early-career researchers usually benefit from a small, stable AI stack. A shorter workflow is easier to justify to supervisors, easier to describe in disclosures, and easier to manage across long revision cycles. The critical step is identifying which bottleneck is actually slowing your manuscript down.

1. Overcoming Structural and Argumentative Bottlenecks

If the draft is struggling with structure, claim development, or evidence use, start with thesify. This is the right entry point when the manuscript needs clearer argumentation, stronger section logic, or tighter alignment between the research question and the final conclusion.

2. Streamlining Literature Review and Synthesis

If the main difficulty sits in the literature review, build your stack around synthesis rather than sentence editing. Consensus is ideal when you need a fast, source-grounded view of a narrow research question.

Litmaps is more effective when the field itself still needs to be mapped before you can write a focused rationale. This is also the stage where many writers need to identify and synthesize key research, sharpen their research gap identification, or return to a structured literature review process before revising the prose itself.

3. Managing Technical Formatting and Drafting Environments

If the writing problem is technical, choose tools that support the drafting environment directly. Trinka is the better fit when the manuscript needs discipline-specific language refinement and tighter technical phrasing. Overleaf is the better fit when several authors are drafting in LaTeX and the paper depends on equations, references, and formatting consistency.

In this part of the workflow, evaluating AI proofreading tools for academic writing and understanding the nuanced differences in academic writing across disciplines usually become more important than adding another general-purpose AI tool.

4. Finalizing Submissions with Review-Oriented Tools

If the manuscript is close to submission, keep the stack narrow and move toward review-oriented tools. At that stage, the priority is stronger alignment, cleaner claims, and fewer avoidable weaknesses at the editorial level. This is where it helps to understand what AI tools for academic peer review actually check, to make the most of your pre-submission review, and to use a downloadable feedback report as the basis for your final revision plan.

The Bottom Line: Keep Reasoning With the Author

A defensible AI workflow follows one principle throughout: use AI to diagnose, compare, organize, and check. Keep reading, reasoning, and final judgment with the author. That approach fits current publication standards more closely and produces a clearer account of how the manuscript was developed. For a fuller framework, review current AI policies in academic publishing and the criteria for selecting academic-grade tools.

If your current draft feels structurally weak, or if you want a clearer sense of how a reviewer is likely to experience the manuscript, thesify is the strongest place to begin. It is most useful when the next revision depends on stronger argument structure, better evidence use, and a more rigorous internal review before submission.

Frequently Asked Questions

Which AI tool should you start with if you are revising a major journal article or grant?

For most early-career researchers, the first hurdle is structural. If you are revising a journal article, dissertation chapter, or grant narrative, start with a tool that helps you test argument flow, evidence use, and section-level alignment. That is where thesify is strongest. It is most useful when your draft needs a rigorous internal review before supervisor or co-author feedback.

If your bottleneck sits inside methods reporting rather than overall structure, add a methods-focused tool like SciScore. If the main challenge is managing repeated institutional language and cross-section consistency, Grantable can support that workflow. The principle stays the same: begin with the tool that matches the writing problem most directly.

Are AI tools for academic writing allowed in journal submissions?

Yes, but in limited and clearly defined roles. Major publisher policies converge on three points: AI tools cannot be listed as authors, substantial AI use in manuscript preparation generally needs to be disclosed, and generative AI images are tightly restricted except in specific methodological cases.

For doctoral candidates and postdocs, proactively navigating AI policies for PhD students is critical to avoiding academic misconduct. The practical rule is straightforward: use AI to support revision, checking, and organization, then explicitly disclose that use and retain full responsibility for the manuscript. Always check the target journal’s specific guidelines before initiating your pre-submission review.

Which AI tools help with literature reviews without writing the paper for you?

For literature reviews, the most useful tools are the ones that help you compare sources, identify patterns, and narrow the field before you draft. Consensus is excellent when you need a source-grounded answer to a focused question. Litmaps is better suited if you need to map the field visually and see how clusters of papers relate over time. In both cases, the writing gain comes from stronger synthesis and clearer framing rather than autogenerated prose.

These tools pair well with a rigorous, step-by-step literature review process. They help you identify and synthesize key research and sharpen your research gap identification before you begin drafting. They support your analytical judgment, but they do not replace direct reading or citation verification.

Do you need several AI tools, or just one small stack?

A small stack is usually the better choice. Early-career researchers benefit more from a focused set of tools tied to specific bottlenecks than from a long list of overlapping platforms. The practical test is simple: choose the shortest stack that solves the real problem in front of you.

As highlighted in our breakdown of the ultimate tech stack for PhD students, this usually means selecting one tool for structure and revision (thesify), one for literature synthesis or mapping (Consensus or Litmaps), and one for technical drafting only if the manuscript demands it (Overleaf or Trinka). A tighter stack produces a cleaner workflow and an easier disclosure trail, and it makes it simpler to confirm that every platform you use meets strict academic-grade tool criteria.

Conclusion: Aligning AI Tools with Long-Term Academic Goals

For early-career researchers, academic writing in 2026 requires a workflow that supports judgment, protects authorship, and holds up under review. The strongest AI stack is therefore a selective one: tools that help you sharpen structure, strengthen evidence use, improve technical clarity, and prepare a manuscript for submission without ever weakening your control over the work itself.

Choosing an AI tool for academic writing in 2026 entails making a decision about data privacy and editorial scrutiny. Understanding pre-submission review vs. peer review must go hand-in-hand with selecting platforms that meet strict academic-grade tool criteria.

For most early-career researchers, the highest-value tools are those that reduce avoidable weaknesses before submission. A structural feedback tool like thesify helps you identify weak analysis patterns and weigh the strengths and weaknesses of your academic argument before those issues draw harsh reviewer comments.

Similarly, a methods-focused tool like SciScore helps you check your methods section against the reporting and reproducibility standards that editors and reviewers expect. As with any AI tool, its use should be handled in line with current AI policies in academic publishing.

The broader lesson is simple: use AI to diagnose, compare, organize, and check. Keep the reading, reasoning, and final judgment with the author. That approach aligns more closely with current publisher expectations, preserves academic voice, and supports stronger long-term writing habits.

Turn Draft Friction Into a Clear Revision Plan

If your draft still contains weak transitions, underdeveloped claims, or sections that do not fully align with your research question, address those problems before they reach a supervisor, reviewer, or grant panel. thesify helps you identify structural weaknesses early, turn broad concerns into specific revision tasks, and prepare your manuscript for a more rigorous round of review.

Create a free thesify account and test your draft before submission.
