AI Tools for Academic Peer Review: What They Actually Check in 2026

As manuscript submissions rise and reviewer fatigue continues to strain academic publishing, journals are increasingly using AI to help screen papers before full review. In 2026, AI tools for academic peer review generally fall into two groups:

Journal-side “gatekeepers” that check submissions for reporting, integrity, or methodological issues.

Author-side “coaches” that help researchers improve drafts before submission.

This guide compares the main AI manuscript review tools: what they actually check, where they help, and where human judgment still matters. If you are navigating the peer review process, understanding the difference between gatekeepers and coaches can help you revise more strategically.

How Journals Now Use AI for Manuscript Screening

The transition to AI-assisted manuscript evaluation has changed what happens before peer review starts. Many journals now use automated screening tools to check submissions for reporting completeness, statistical issues, integrity signals, and journal-compliance problems before the manuscript proceeds further in the editorial workflow. Technical completeness is increasingly treated as a gate to human evaluation, not a minor administrative step. For researchers navigating the traditional peer review process, understanding this change is vital because many avoidable problems are now caught before full review begins.

What Counts as an AI Peer Review Tool?

Definition: In this article, AI tools for academic peer review are systems that help assess a manuscript before, during, or around formal review. Some are used by journals to screen submissions, while others are used by authors to strengthen a draft before submission.

At the broadest level, these tools fall into two groups: journal-side gatekeepers and author-side coaches. In practice, those two groups break down into four main functional types.

Reviewer-Style Manuscript Evaluation Tools

These tools generate structured feedback on claims, argumentation, section quality, or overall submission readiness. They are designed to simulate parts of reviewer-style critique rather than simply edit prose.

Methods and Reporting Check Tools

These tools scan for missing methodological details, reporting gaps, and incomplete alignment with journal or discipline-specific standards. Their job is usually compliance and completeness, not interpretive judgment.

Integrity and Screening Tools

These tools flag issues such as plagiarism, image manipulation, duplicate submission patterns, or other research-integrity risks. They are part of the screening layer, not a substitute for expert review.

Language and Pre-Submission Feedback Tools

These tools help authors improve clarity, structure, and revision quality before formal review begins. For more on where this stage fits, see What Authors Need to Know About Pre-Submission Review vs Peer Review. You can also read our broader guide to Best AI Tools for Academic Research in 2026: A Step-by-Step Workflow Guide.

Publisher-Side Screening Tools: Rigor, Integrity, and Compliance

These publisher-side screening tools function as the gatekeepers of the peer review workflow. Rather than evaluating a manuscript’s overall intellectual contribution, they are designed to check whether a submission is complete, compliant, and technically sound enough to move further into editorial assessment. For authors, understanding these tools helps clarify which problems may be flagged before full peer review begins.

| Tool | Primary Check | What It Looks For |
| --- | --- | --- |
| SciScore | Methodological rigor | Missing RRIDs, blinding, randomization protocols, and other reporting markers tied to reproducibility |
| StatReviewer | Statistical and reporting integrity | Incomplete statistical reporting, missing methodological details, and other issues surfaced through automated reporting checks |
| Proofig | Image integrity | Duplicated, manipulated, spliced, or suspicious figures, including anomalies that are easy to miss manually |
| Penelope | Technical and reporting compliance | Missing funding or ethics statements, reference mismatches, structural omissions, and formatting problems |

SciScore vs StatReviewer: Automating Rigor and Reproducibility

SciScore and StatReviewer support different parts of publisher-side screening. SciScore focuses on methodological transparency by scanning manuscripts against reporting frameworks such as MDAR, ARRIVE, and CONSORT, then checking whether key design and resource details are actually stated in the methods section. Its reports look for elements such as blinding, randomization, and Research Resource Identifiers (RRIDs), and it generates a rigor score out of 10 based on reporting completeness and resource identification.

StatReviewer, by contrast, is designed to assess the statistical and reporting integrity of a manuscript. Integrated into editorial systems such as Editorial Manager, it runs thousands of automated tests to flag missing reporting elements, statistical issues, and other problems that editors may want addressed before review moves forward. Together, these tools show why aligning your methods section design with formal reporting expectations is now part of submission readiness, not a minor clean-up step at the end.

Proofig AI and the Rise of Image Integrity Checks

Proofig reflects a broader shift in publisher-side screening: image integrity is no longer treated as a niche concern handled only by manual inspection. Its software is designed to detect duplication, reuse, and manipulation within scientific figures, including cases where visual elements have been rotated, scaled, flipped, cropped, or partially overlapped to disguise repetition. That matters because these are exactly the kinds of anomalies a human reviewer may miss when working quickly across large submission volumes. Proofig’s own documentation emphasizes these transformation-based detections, while institutional guidance notes that the tool flags potential image-integrity issues for human review rather than making the final misconduct determination itself.

In 2026, Proofig has also positioned itself more clearly within a wider integrity-screening ecosystem. Its partner materials describe Proofig’s image checks being paired with iThenticate’s text-similarity screening through PubShield, bringing image and plagiarism-related review steps into a single workflow rather than forcing editors or institutions to run separate systems. This does not turn Proofig into a plagiarism detector in its own right. The point is integration: text similarity and image integrity can now be reviewed together, which makes pre-publication screening more comprehensive and easier to manage at scale.

Reviewer Matching and Integrity Systems

Publisher-side AI is not used only to check manuscripts for reporting, integrity, and compliance problems. It is also used to support two closely related editorial tasks:

Identifying the right human reviewers.

Detecting high-risk submissions earlier in the workflow.

Reviewer-matching tools such as Prophy and MDPI’s Reviewer Finder move beyond basic keyword search by using semantic analysis to connect a manuscript’s concepts, methods, and publication context with suitable experts. This helps journals identify more specialized reviewers and make reviewer selection less dependent on narrow internal networks.

Alongside these systems, publishers are also expanding their integrity-screening infrastructure. The STM Integrity Hub provides shared checks for issues such as duplicate submissions and other research-integrity signals, while tools such as Proofig focus on image-level anomalies that may not be obvious during manual review. Together, these systems extend publisher-side screening beyond formatting and methods checks alone: one set improves reviewer assignment, while the other helps flag potentially problematic submissions before full review proceeds.

AI Peer Review Assistants for Authors: The Coaches

Because journals increasingly use automated screening tools before full review begins, authors now need stronger pre-submission diagnostics on their side as well. This is where author-side AI tools act as “coaches.” Rather than checking a manuscript for journal compliance alone, they help researchers identify structural weaknesses, clarify arguments, strengthen evidence use, and revise more strategically before submission.

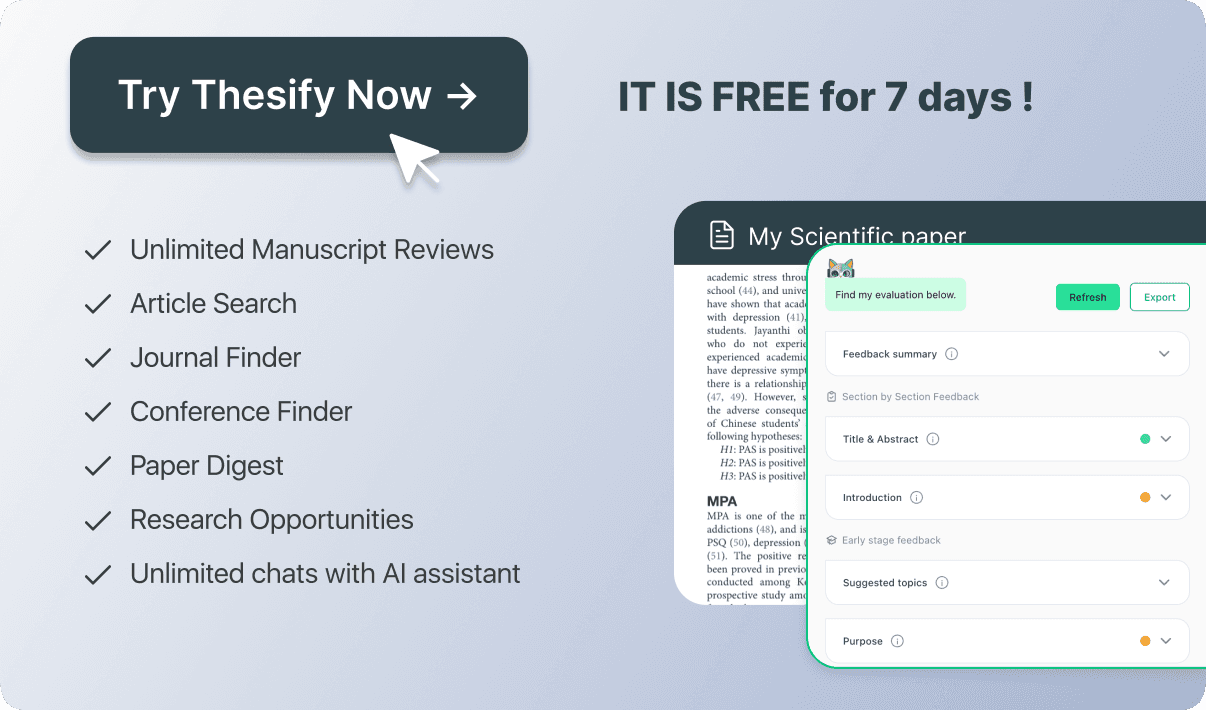

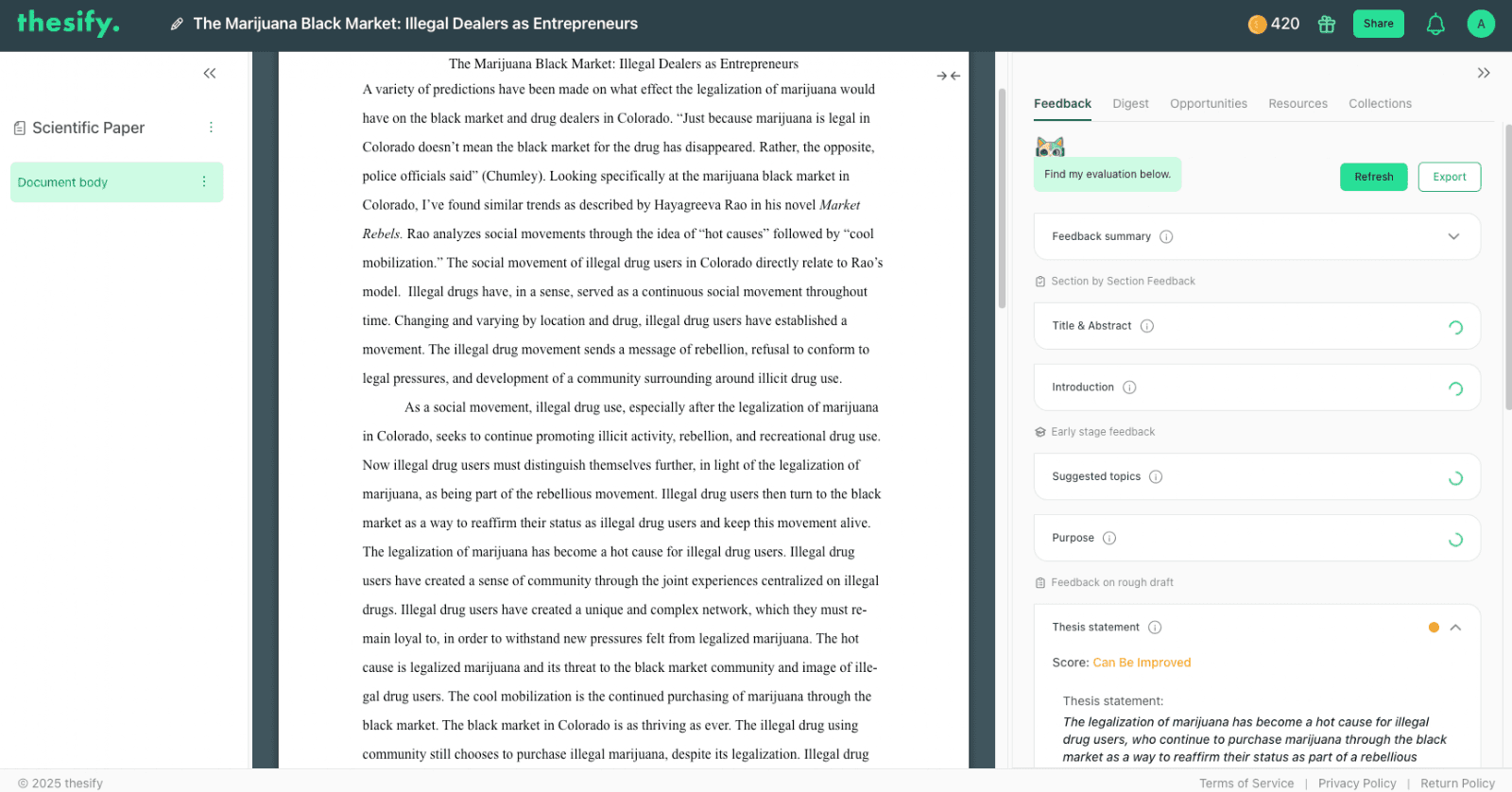

thesify: Pre-Submission Review and Analytical Feedback

thesify functions as a pre-submission feedback tool rather than a proofreading assistant. Its role is to examine the quality of the manuscript’s reasoning by highlighting issues such as weak thesis clarity, gaps in logical flow, underinterpreted evidence, and other weak analysis patterns that may survive technical screening but still create problems in review. By running a pre-submission review, authors can test how clearly the draft presents its argument and how effectively its claims are supported across sections.

thesify functions as an academic coach by evaluating drafts against specific rubric criteria, highlighting exactly where a comparative analysis needs more depth before submission.

thesify helps authors identify structural weaknesses and unresolved reasoning problems earlier in the academic writing workflow. Its downloadable feedback report can make that process more concrete by showing where the draft still needs stronger reasoning, clearer interpretation, or better-supported claims. This makes it particularly useful for identifying and fixing weak evidence before submission.

AI Review Workspaces: Enago Read

Some author-side tools are designed to create a more interactive review environment rather than simply returning a static report. Enago Read fits this category. Its AI Peer Review Workspace is built around human-AI collaboration, combining a Copilot interface for querying the manuscript with an LLM Roundtable architecture intended to cross-check model outputs and reduce low-quality or hallucinated feedback. This places Enago Read closer to a structured review workspace than to a drafting assistant.

Language, Revision, and Literature Discovery Tools

Other author-side tools support manuscript development more indirectly. Paperpal and Writefull are primarily language and revision tools. Paperpal focuses on context-aware academic phrasing, paraphrasing, and drafting support, while Writefull provides language feedback calibrated to academic writing and trained on published journal articles. These tools are most useful when a draft’s main weaknesses involve clarity, tone, or sentence-level revision rather than argument quality. They should be treated as one part of a pre-submission review, not as a replacement for it.

Some literature discovery tools can still support revision before submission, even if they are not peer review tools in the strict sense. Elicit helps researchers synthesize evidence across papers, while Connected Papers can clarify how a field is structured and where a manuscript needs stronger literature positioning.

Best AI Tools for Academic Peer Review in 2026

These tools solve different challenges in the peer review workflow, so they should not be treated as interchangeable. Some platforms are built for methodological and statistical screening, some for integrity checks, and others for pre-submission feedback or language revision. The comparison below gives a high-level view of the main tools discussed in this article, what each one is designed to do best, and who it is actually built for.

Feature Comparison: The Best AI Tools at a Glance

| Tool / Platform | Primary Use Case | Key Strength | Target User |
| --- | --- | --- | --- |
| SciScore | Methodological verification | Checks adherence to reporting frameworks such as ARRIVE and MDAR, and surfaces missing rigor markers | Editors / Publishers |
| StatReviewer | Automated statistical checks | Flags statistical and reporting issues through automated tests in editorial workflows | Editors / Publishers |
| thesify | Pre-submission feedback | Provides deep structural analysis, reviewer-style feedback, and a downloadable AI feedback report | Authors |
| Enago Read | Peer review workspace | Supports human-AI review through Copilot tools and LLM cross-verification via Roundtable | Editors / Reviewers |
| Paperpal | Language refinement | Offers context-aware academic phrasing and drafting support | Authors |
| STM Integrity Hub | Academic fraud detection | Helps identify duplicate submissions and other research-integrity signals across publisher workflows | Publishers |

Manuscript Evaluation and Reviewer-Style Feedback Tools

Unlike publisher-side screening systems, reviewer-style tools are designed to interrogate the manuscript itself. Their aim is to simulate parts of reviewer critique by stress-testing claims, surfacing methodological weaknesses, and identifying sections likely to draw objections in review. This is where pre-submission feedback becomes useful for a draft that still needs stronger reasoning, clearer evidence use, or better positioning. For a thesify-specific example of how that feedback can be turned into revision steps, see the downloadable feedback report.

thesify

Unlike gatekeeping tools that check for formatting compliance, thesify provides reviewer-style feedback on the structural logic of your draft, such as evaluating the strength of your central thesis.

Best for: Pre-submission review and reviewer-style feedback on structure, argumentation, and section-level revision priorities.

What it checks: thesify’s Pre-Submission Review focuses on clarity, structure, argumentation, thesis quality, evidence use, and adherence to academic standards. It also offers concrete recommendations, flags gaps in arguments or evidence, gives insights on each part of the manuscript, provides table and figure feedback, and produces a feedback report that can be shared during revision.

Main limitation: thesify is not built as a citation-verification engine or journal-side compliance checker. Its strength is manuscript revision guidance rather than technical screening.

Who should use it: Authors who need stronger reviewer-style feedback on reasoning, structure, and section-level quality before a human reviewer sees the draft. It is especially useful when the manuscript’s main weakness is not grammar alone, but unresolved logic gaps, weak evidence use, or unclear argumentation.

Manusights

Best for: Full-manuscript review when you want citation verification, figure analysis, and journal-specific readiness scoring in one workflow.

What it checks: Manusights positions itself around submission readiness rather than language polishing alone, with public comparisons emphasizing citation verification against large live databases, figure analysis, and journal-specific readiness scoring.

Main limitation: Its stronger diagnostic and expert-review options sit behind paid tiers, so the most detailed review is not the entry-level experience.

Who should use it: Researchers preparing high-stakes submissions who want a broad technical and reviewer-style diagnostic before journal submission.

Reviewer3

Best for: Rapid reviewer-style feedback on methodology, reproducibility, and context.

What it checks: Reviewer3 uses multiple specialized reviewers to assess study design, statistical approaches, analytical frameworks, data availability, code documentation, and how limitations are positioned within the wider literature.

Main limitation: Public descriptions emphasize methodology, reproducibility, and context, but do not show the same level of citation verification, figure analysis, or journal-specific scoring associated with some competing tools.

Who should use it: Authors who want fast, structured critique on whether their manuscript reads like a credible study rather than just a polished draft.

q.e.d Science

Best for: Stress-testing claim structure and identifying gaps between evidence and conclusions.

What it checks: q.e.d Science analyzes the claims and supporting data in a manuscript, identifies gaps that may require revision or further work, and compares the paper with other publications to comment on originality and positioning. Its bioRxiv integration also makes it distinctive within this group.

Main limitation: Its strength is logical decomposition and claim mapping, not full field-specific judgment, so authors still need to evaluate whether the feedback makes sense in disciplinary context.

Who should use it: Authors who want to test whether the paper’s claims are actually supported and whether its novelty claims are proportionate before formal review.

PaperReview.ai

Best for: Fast, free first-pass feedback on short computer science or machine learning papers.

What it checks: PaperReview.ai, presented as the Stanford Agentic Reviewer, converts a paper into structured text, grounds its review in related work, and produces feedback across multiple review dimensions.

Main limitation: It reads only the first 15 pages and is strongest in arXiv-heavy fields such as CS and ML, so it is not a full-manuscript solution across disciplines.

Who should use it: Authors in CS or ML who want a fast, free early-stage critique and understand that it is a triage tool rather than a complete submission review.

Methods and Reporting Check Tools

These tools check compliance and completeness, not argument quality. Some are publisher-side systems that test whether a manuscript meets formal reporting and submission requirements. Others, like thesify, work earlier in the workflow by helping authors strengthen how methods, results, and discussion sections are written before the manuscript reaches formal screening. This is where methods section feedback and in-depth methods, results, and discussion feedback matter: the issue is often not only what the study did, but whether the manuscript makes those steps visible, interpretable, and review-ready.

| Tool | Best For | Main Limitation |
| --- | --- | --- |
| SciScore | Checking methods transparency and adherence to reporting standards such as MDAR, ARRIVE, and CONSORT | It assesses reporting completeness, not whether the research question, design logic, or interpretation is persuasive |
| StatReviewer | Auditing statistical and reporting integrity within editorial workflows | It is strongest where reporting follows standard structures and guidelines, rather than highly unusual or unconventional formats |
| Penelope | Checking journal-specific technical readiness at the revision stage | It depends on structured manuscript features and journal requirements, so its usefulness is tied closely to how the submission is formatted and configured |
| thesify | Strengthening methods, results, and discussion sections before submission | It helps authors improve section-level reasoning and reporting, but it is not a journal-side compliance engine |

SciScore

Best for: Ensuring that the methods section documents the reporting details needed for transparency and reproducibility.

What it checks: SciScore assesses manuscripts against criteria drawn from reporting frameworks such as MDAR, ARRIVE, and CONSORT, and looks for elements such as rigor criteria and key resource identification, including RRIDs. It generates a reporting score to indicate how completely those features are documented.

Main limitation: SciScore is a transparency checker, not a reviewer of scientific reasoning. It cannot determine whether the research question is interesting, whether the design is conceptually strong, or whether the conclusions are proportionate to the evidence.

Who should use it: Authors in fields where reproducibility and reporting standards are central, especially in life sciences and medicine.

StatReviewer

Best for: Automated checks on statistical and reporting integrity before or during editorial review.

What it checks: StatReviewer is integrated into editorial systems such as Editorial Manager and is described as a decision-support tool that analyzes statistical and reporting integrity. It runs automated tests and flags issues editors and reviewers may want addressed before review proceeds.

Main limitation: Its strength lies in systematic checking, not interpretive judgment. It is most useful for surfacing reporting and statistical issues, not for deciding whether a manuscript’s argument is original or convincing.

Who should use it: Journals and authors who want stronger statistical reporting discipline before in-depth human review begins.

Penelope

Best for: Technical prechecks tied to journal requirements and submission readiness.

What it checks: Penelope is an automated manuscript checker that tests whether a submission meets journal requirements. Its checks include ethics statements, informed consent, data access statements, conflicts of interest, citations and references, keywords, word counts, and other structural or declaration-based requirements.

Main limitation: Penelope is highly effective for formal completeness, but it does not evaluate the intellectual quality of the work. Its performance also depends on being able to identify the manuscript’s relevant structural elements and journal-specific requirements.

Who should use it: Authors and journals that want to catch missing declarations, formatting problems, and structural omissions before a manuscript reaches deeper editorial review.
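To make the idea of a rule-based declaration check more concrete, here is a minimal Python sketch of the kind of precheck described above. It is not Penelope’s implementation: the names `REQUIRED_STATEMENTS`, `missing_statements`, and `over_word_limit`, the phrase patterns, and the word limit are all hypothetical, and real journal requirements vary.

```python
import re

# Hypothetical phrase patterns for required declarations.
# Real journals define their own requirements and wording.
REQUIRED_STATEMENTS = {
    "ethics statement": r"\b(ethics (approval|statement|committee)|institutional review board|IRB)\b",
    "informed consent": r"\binformed consent\b",
    "data availability": r"\bdata (availability|access)\b",
    "conflicts of interest": r"\b(conflicts? of interest|competing interests?)\b",
    "funding": r"\b(funding|funded by|grant)\b",
}


def missing_statements(manuscript_text: str) -> list[str]:
    """Return the names of required statements with no matching phrase."""
    return [
        name
        for name, pattern in REQUIRED_STATEMENTS.items()
        if not re.search(pattern, manuscript_text, flags=re.IGNORECASE)
    ]


def over_word_limit(manuscript_text: str, limit: int) -> bool:
    """Crude word count: whitespace-separated tokens compared with a limit."""
    return len(manuscript_text.split()) > limit


draft = (
    "This study was approved by the institutional review board. "
    "Informed consent was obtained from all participants. "
    "The authors declare no competing interests."
)

print(missing_statements(draft))           # ['data availability', 'funding']
print(over_word_limit(draft, limit=5000))  # False
```

A check like this can only say that a phrase is absent. It cannot say whether an ethics statement that is present is adequate, which is why formal completeness and intellectual quality stay separate questions.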

thesify

Best for: Improving methods, results, and discussion sections before submission through section-level academic feedback.

What it checks: thesify helps authors identify reporting gaps, unclear procedures, underexplained results, and weak interpretation across core scientific sections. Rather than functioning as a formal journal checker, it supports revision by showing where the draft still needs stronger explanation, transparency, or analytical follow-through.

Main limitation: thesify is not a publisher-side compliance engine and does not replace tools built specifically for journal declarations, plagiarism screening, or automated statistical audits.

Who should use it: Authors who want to strengthen section-level clarity and reviewer readiness before the manuscript enters technical screening or external review.

Author-side AI tools like thesify simulate reviewer-style critique by providing concrete, actionable suggestions to improve methodological transparency and statistical reporting before journal submission.

Integrity and Screening Tools

Integrity screening tools are part of the peer review ecosystem, but they do not replace content evaluation. Their role is to flag signals of overlap, manipulation, or coordinated misconduct before or during editorial review. They help journals detect risk. They do not tell editors whether a paper’s argument is original, persuasive, or methodologically well interpreted.

Similarity Check / iThenticate

What it flags: Text overlap against a large scholarly database through Crossref’s Similarity Check service, which is powered by iThenticate and used to support originality screening in editorial workflows.

What it does not tell you: A similarity report is not the same as a plagiarism verdict. It does not decide whether overlap is legitimate quotation, routine methods language, poor citation practice, or misconduct. It also does not evaluate argument quality or intellectual contribution. If you want the broader student-facing context around automated scrutiny, read How Do Professors Detect AI in 2026? Tools, Accuracy, and False Positives.
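A toy example can show why a similarity score is not a verdict. The Python sketch below measures what share of a submission’s three-word sequences also appear in a source text. This is an illustrative stand-in, not how iThenticate computes similarity; the function names `word_ngrams` and `overlap_share` and the sample sentences are assumptions made for the example.

```python
def word_ngrams(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Lowercased word n-grams of a text, as a set."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_share(submission: str, source: str, n: int = 3) -> float:
    """Share of the submission's word n-grams that also appear in the source."""
    sub = word_ngrams(submission, n)
    if not sub:
        return 0.0  # text too short to form any n-gram
    return len(sub & word_ngrams(source, n)) / len(sub)


# Invented sample sentences: a routine methods line and a near-identical source.
submission = "samples were centrifuged at 4000 rpm for 10 minutes"
source = "all samples were centrifuged at 4000 rpm for 10 minutes before analysis"

print(overlap_share(submission, source))  # 1.0
```

Every three-word sequence in this routine methods sentence overlaps with the source, yet the number alone cannot distinguish standard methods language from copying. That call stays with the editor.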

Proofig

What it flags: Image-level anomalies such as duplication, reuse, rotation, scaling, flipping, overlap, and other suspicious visual patterns that may be difficult to catch in manual review alone.

What it does not tell you: It does not prove misconduct on its own, and it does not verify whether the underlying raw data are valid. It flags image-integrity concerns for further human assessment.

STM Integrity Hub

What it flags: Shared integrity signals across publishers, including issues such as duplicate submissions and patterns associated with paper-mill or manipulated-submission activity.

What it does not tell you: It does not evaluate the quality of the research question, the depth of the analysis, or whether the paper makes a meaningful contribution. Its purpose is risk detection, not scholarly judgment. For a related discussion of why integrity cannot be reduced to a fast AI shortcut, read: The Impact of Generative AI on Student Learning: Why the OECD Warns Against "Fast AI".

Language and Pre-Submission Revision Tools

At this stage of the workflow, the main distinction is not between “good” and “bad” AI tools, but between tools built for language polishing and tools built for manuscript revision. Paperpal, Writefull, and Trinka are primarily language-and-editing tools. thesify, by contrast, is stronger on structure, logic, section-level feedback, and revision guidance before external review. This is where AI writing feedback for researchers becomes more useful than sentence-level correction alone, especially when a draft still needs stronger results section feedback, discussion section feedback, or title and abstract optimization.

| Tool | Best For | Not Designed For |
| --- | --- | --- |
| thesify | Pre-submission review, structural logic, section-level feedback, and revision planning | Line-level grammar correction as its primary function |
| Paperpal | Real-time language polishing, rewriting support, and academic drafting assistance | Deep analytical critique of argument structure or section-level reasoning |
| Writefull | Academic language feedback tailored to research writing | Evaluating whether the manuscript’s claims, structure, or interpretation are convincing |
| Trinka | Academic and technical grammar correction, style improvement, and journal-support utilities | Auditing argument quality, conceptual stakes, or reviewer-style weaknesses in the draft |

thesify

Best for: AI-assisted pre-submission review that helps authors revise the manuscript as an argument rather than as a set of sentences.

What it checks: thesify’s pre-submission review and section-level tools focus on clarity, structure, argument gaps, evidence use, and revision priorities across core sections. thesify emphasizes feedback on Methods, Results, and Discussion, along with concrete recommendations, missing evidence or references, and exportable feedback reports that can support revision planning.

Not designed for: Automated proofreading alone. Its role is not to function as a general-purpose grammar checker, but to help authors improve reasoning, interpretation, and reviewer readiness during late-stage drafting.

Who should use it: Authors who need stronger section-level revision, clearer logic, and more structured pre-submission feedback before a human reviewer sees the draft.

Unlike basic grammar checkers, author-side AI tools like thesify provide a comprehensive reviewer-style summary, helping you identify methodological and analytical gaps before your manuscript faces publisher gatekeepers.

Paperpal

Best for: Real-time drafting and editing support during manuscript development.

What it checks: Paperpal offers grammar checks, rewriting support, vocabulary suggestions, translation, citation-related features, and submission-readiness checks aimed at academic writing workflows.

Not designed for: Deep structural or analytical critique of the manuscript’s argument. Its strength is language refinement and workflow support rather than reviewer-style evaluation of reasoning.

Who should use it: Researchers who want context-aware phrasing help and drafting support while the manuscript is still being written.

Writefull

Best for: Sentence-level academic phrasing and language feedback calibrated to research writing.

What it checks: Writefull provides language feedback using models trained on millions of journal articles, and offers tools for revising phrasing, paraphrasing, and working directly inside academic writing environments such as Word.

Not designed for: Evaluating whether the manuscript’s logic, section structure, or central claims are strong enough for review. Its focus is language quality, not manuscript judgment.

Who should use it: Researchers, especially those working in a second language, who want academic language support that reflects published research writing conventions.

Trinka

Best for: Academic and technical grammar correction with privacy-conscious writing support.

What it checks: Trinka presents itself as a privacy-first grammar and language assistant for academic and technical writing, with tools that extend into citation support, journal finding, and related submission utilities.

Not designed for: Auditing whether a manuscript’s claims matter, whether the reasoning is persuasive, or whether the section-level interpretation is strong enough for peer review.

Who should use it: Researchers who want formal language correction and technical writing support, especially when submission preparation requires both editing and journal-facing utilities.

Navigating the Ethics of AI in Peer Review

AI use in peer review raises specific ethical questions about confidentiality, accountability, and the limits of automation in editorial decision-making.

Confidentiality and the Risk of Uploading Unpublished Drafts

Major publishers have drawn a clear line on this issue. Elsevier states that reviewers should not upload manuscripts, peer review reports, or editorial letters into AI tools because doing so may breach confidentiality and data-privacy obligations. Springer Nature likewise instructs peer reviewers not to upload manuscripts into generative AI tools and emphasizes that manuscripts contain confidential and sensitive information. This is why ethical AI use in peer review depends not just on what a tool can do, but on whether it can be used without exposing unpublished work to public or non-compliant systems. For a broader framework, see adhering to ethical use cases of AI in academic writing.

Accountability and the Human-in-the-Loop Requirement

COPE’s guidance remains clear on authorship and responsibility: AI tools cannot qualify as authors because they cannot take responsibility for the work, and humans remain accountable for the accuracy, integrity, and final judgment involved in publication decisions. In practical terms, that means AI is not a peer. It can assist with diagnostics, organization, or revision support, but it cannot assume authorship, ethical responsibility, or editorial accountability.

Researchers, reviewers, and editors must therefore treat AI outputs as inputs to human judgment rather than substitutes for it. Used carelessly, these tools can encourage over-reliance and cognitive offloading; used carefully, they can support revision without displacing responsibility for the final manuscript.

Are AI Peer Review Tools Ethical in Academia?

AI peer review tools can be used ethically in academia, but only within clear limits. The central question is not whether AI appears somewhere in the workflow. It is whether the tool protects confidentiality, preserves human judgment, and keeps responsibility with the researcher, reviewer, or editor. Current guidance from COPE and major publishers makes that boundary explicit, especially in peer review, where manuscripts and review materials are confidential by default.

When evaluating ethical AI in academic writing, use this checklist:

Privacy and retention: Do not upload unpublished manuscripts, review reports, or editorial letters into public generative AI tools. Elsevier and Springer Nature both state that reviewers should not upload manuscripts into such systems because of confidentiality, proprietary-rights, and data-privacy risks.

Transparency: If AI is used in a way that affects manuscript preparation or review support, its role should be declared according to journal or publisher policy. Springer Nature states that reviewers must disclose any use of generative AI tools in the peer review report.

Hallucination risk: AI outputs can sound plausible while still being wrong. Reviewers and authors must verify claims, citations, summaries, and critiques rather than assuming the tool is accurate. COPE’s guidance on AI and authorship is grounded in this larger accountability problem.

Over-reliance: A useful diagnostic tool can still become a bad shortcut if it starts replacing independent judgment. This is where the difference between careful use and a fast AI shortcut matters most.

Accountability: Humans remain fully responsible for the accuracy, integrity, and final evaluation of the work. AI can assist, but it cannot take ownership of a manuscript or a review.

Where AI Can Support Review Responsibly

Used well, AI can support review by handling diagnostic and organizational tasks that are time-consuming but rule-based enough to benefit from automation. That includes reporting checks, statistical and integrity screening, manuscript triage, and structured revision support before formal peer review. In this role, AI is assisting human evaluation, not substituting for it. This is also where tools should be judged against the criteria for academic-grade AI tools, especially around privacy, traceability, and research-specific fit.

Where Human Judgment Still Cannot Be Outsourced

Human judgment still cannot be outsourced in any area that depends on accountability, disciplinary interpretation, or ethical reasoning. COPE states that AI tools cannot qualify for authorship because they cannot take responsibility for the submitted work. The same logic applies to peer review: AI is not a peer, because it cannot be accountable for an editorial recommendation, a scientific judgment, or an ethical decision. Researchers, reviewers, and editors therefore remain fully responsible for the accuracy and soundness of anything AI helps produce.

What to Check Before Uploading a Draft

Before uploading a draft to any tool, check whether the system is suitable for confidential academic work. At a minimum, you should know how the platform handles privacy, whether content is retained, whether outputs are traceable enough to verify, and whether the workflow encourages revision rather than dependence. This matters not only for compliance, but also for intellectual ownership. A tool that helps you think more clearly is serving a different function from one that encourages speed at the cost of interpretation, originality, or accountability.

How to Choose the Right AI Peer Review Tool for Your Workflow

Choosing the right tool depends on what problem you are trying to solve. The best AI tools for academic peer review are not interchangeable. Some are built for technical checks, some for integrity screening, some for reviewer-style critique, and some for revision support before submission.

If you need methods and reporting checks:

Use SciScore, StatReviewer, or Penelope. These tools are useful when your main concern is reporting completeness, methodological transparency, statistical consistency, or journal-specific submission requirements. They are strongest when you need to make sure the manuscript is technically ready for screening, not when you need help improving the argument itself.

If you need integrity screening:

Use Similarity Check / iThenticate, Proofig, or, at the publisher level, the STM Integrity Hub. These tools are designed to flag text overlap, image anomalies, duplicate submissions, or broader integrity signals. They are useful when the main risk is ethical or technical screening, but they do not tell you whether the paper’s reasoning is strong.

If you need reviewer-style critique:

Use Manusights, Reviewer3, q.e.d Science, or PaperReview.ai, depending on your field and manuscript stage. These tools are better suited to stress-testing claims, surfacing methodological weaknesses, and identifying the kinds of objections a reviewer may raise. This is the right choice when you want something closer to manuscript evaluation than compliance checking.

If you need revision feedback before formal peer review:

Use thesify when the draft needs stronger structure, logic, and section-level revision rather than sentence-level editing alone. Tools like Paperpal, Writefull, and Trinka are more useful for language refinement, while thesify is better suited to authors who need chapter-level revision and feedback before external review. If you want the broader workflow context, see our guide to the best AI tools for academic research.

The practical rule is simple: match the tool to the weakness in the draft. If the problem is compliance, use a checker. If the problem is integrity, use a screening tool. If the problem is likely reviewer objections, use reviewer-style critique. If the problem is that the manuscript still needs stronger reasoning before submission, use pre-submission review AI that supports revision.
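
The matching rule above can be sketched as a small lookup. This is only an illustration: the tool names come from this guide, while the category keys and the `suggest_tools` function are hypothetical labels invented for the example, not part of any product.

```python
# Hypothetical lookup table. Tool names are taken from this guide;
# the category keys are illustrative labels, not an official taxonomy.
TOOLS_BY_WEAKNESS = {
    "compliance": ["SciScore", "StatReviewer", "Penelope"],
    # The STM Integrity Hub operates at the publisher level, not for authors.
    "integrity": ["Similarity Check / iThenticate", "Proofig", "STM Integrity Hub"],
    "reviewer_objections": ["Manusights", "Reviewer3", "q.e.d Science", "PaperReview.ai"],
    "reasoning": ["thesify"],
}


def suggest_tools(weakness: str) -> list[str]:
    """Return the tools this guide associates with a given draft weakness."""
    key = weakness.strip().lower().replace(" ", "_")
    if key not in TOOLS_BY_WEAKNESS:
        known = ", ".join(sorted(TOOLS_BY_WEAKNESS))
        raise ValueError(f"Unknown weakness {weakness!r}; expected one of: {known}")
    # Return a copy so callers cannot modify the shared table.
    return list(TOOLS_BY_WEAKNESS[key])
```

Calling `suggest_tools("reviewer objections")` returns the reviewer-style critique tools. An unrecognized weakness raises an error rather than guessing, which mirrors the point that these tools are not interchangeable.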

Checklist: Preparing Your Draft for Automated Review

Use this checklist before you submit to catch issues that automated screening tools often flag first:

Unique Identifiers: Include RRIDs for all relevant cell lines, antibodies, software, and other research resources.

Reporting Standards: Check that your abstract and core sections follow the required structure for frameworks such as CONSORT or PRISMA, where relevant.

Logical Bridges: Make sure factual claims do not stop at description. Each one should make clear why the point matters.

Declaration Completeness: Label funding, ethics, conflict of interest, and data access statements explicitly so they are easy to identify.
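
Two of the checklist items, RRIDs and labeled declarations, are mechanical enough to pre-check yourself. The sketch below is a rough self-check under stated assumptions, not a reproduction of how any screening tool works: the RRID pattern is a loose approximation, and the keyword lists and the `precheck` function are hypothetical.

```python
import re

# Loose approximation of RRID syntax such as "RRID:AB_2138153".
# This is an assumption for illustration, not an official validator.
RRID_PATTERN = re.compile(r"\bRRID:\s?[A-Za-z]+_[A-Za-z0-9:_-]+")

# Hypothetical keyword lists for the four declarations named in the checklist.
DECLARATION_KEYWORDS = {
    "funding": ("funding",),
    "ethics": ("ethics", "ethical approval"),
    "conflict of interest": ("conflict of interest", "competing interests"),
    "data access": ("data availability", "data access"),
}


def precheck(manuscript: str) -> dict:
    """Collect RRIDs and list declarations that are never mentioned."""
    text = manuscript.lower()
    missing = [
        name
        for name, keywords in DECLARATION_KEYWORDS.items()
        if not any(keyword in text for keyword in keywords)
    ]
    return {
        "rrids": RRID_PATTERN.findall(manuscript),
        "missing_declarations": missing,
    }
```

A keyword match only shows that a term appears somewhere in the text, not that the statement is complete or correctly labeled, so treat an empty `missing_declarations` list as a prompt to confirm by eye rather than as a pass.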

Where thesify Fits in the AI Peer Review Workflow

thesify fits most naturally in the author-side revision stage, after you have a complete draft but before external review begins. It is not a journal-side screening tool, and it is not primarily a language polisher. Its role is to provide pre-submission feedback on structure, argumentation, evidence use, and section-level weaknesses that may still create problems once a manuscript reaches human reviewers. The platform’s Pre-Submission Review is explicitly framed around clarity, structure, argumentation, adherence to academic standards, and concrete recommendations for revision.

thesify helps authors stress-test their claims by evaluating the Discussion section and checking, before peer review, whether the implications drawn are realistic, grounded in the data, and appropriately cautious.

This makes thesify a better fit when the manuscript contains unresolved logic gaps, weak interpretation, or uneven section quality. In that sense, it sits alongside journal-facing tools without trying to replicate them: SciScore, StatReviewer, and Penelope are designed to test compliance and completeness, while thesify is better suited to revision before that screening begins.

If you are working section by section, the most relevant starting points are Methods Section Feedback in thesify, Scientific Paper Results Section Feedback: How to Audit Your Results, and Scientific Paper Discussion Section Feedback: How to Stress-Test Your Claims.

thesify’s strongest use case is therefore not “AI writer,” but AI feedback for academic writing that helps you revise with a clearer sense of where the draft is vulnerable. thesify reviews a draft for gaps in arguments, evidence, and references, and provides part-by-part manuscript insights and a downloadable feedback report that can be shared during revision.

Conclusion: Moving Beyond Compliance to Persuasion

AI is now part of the peer review ecosystem, but not all tools are doing the same work. Publisher-side systems help journals check reporting completeness, integrity signals, and technical readiness. Author-side tools are more useful when a manuscript still needs stronger structure, clearer interpretation, or more defensible claims. Human judgment remains the point at which novelty, significance, and scholarly value are actually assessed.

That distinction matters because passing technical screening is not the same as persuading a reviewer. Gatekeeper AI confirms that key elements are present, while author-side tools like thesify help you strengthen the argument those elements are meant to support. If your draft is complete but still vulnerable in its reasoning, evidence use, or section-level clarity, the next step is to run a pre-submission review before the manuscript reaches external review.

Final Takeaways

Not all AI peer review tools do the same job.

Some screen for methods, reporting, or integrity. Others provide reviewer-style critique or revision support.

Compliance tools, integrity tools, and revision tools solve different problems.

Choosing well depends on whether your draft needs technical readiness, risk screening, or stronger argumentation.

Human judgment still matters.

AI can assist with diagnostics and workflow support, but accountability for interpretation, accuracy, and final evaluative decisions remains human.

Sign Up for thesify for Free to Strengthen Your Draft Before Review

Before you submit, sign up to thesify to check how well your manuscript holds together across structure, evidence, and section-level reasoning.

Related Posts:

What Authors Need to Know About Pre-Submission Review vs Peer Review: Find out more about the role of AI in pre-submission and peer review. Artificial intelligence is changing scholarly publishing. Automated tools can assist with language editing, highlight structural issues and even generate summary reviews. However, there is growing concern about mislabelling these tools as “peer review” and about their appropriate use. Get clear on which tools support editors but do not replace the role of human reviewers. Learn about how the final assessment of scientific merit, originality, and suitability for publication remains the responsibility of human editors and peer reviewers.

Comparing AI Academic Writing Tools: thesify vs Enago Read: Compare thesify vs Enago Read for AI-based academic writing support. Explore features, pricing and test results to see which tool fits your writing needs. Learn why AI writing feedback tools matter for academic writing. Get a full feature comparison: digests, research feedback, and AI features for thesify vs. Enago Read. Plus, check out a published article test that evaluates thesify and Enago Read for research paper digests.

AI Writing Review Showdown: paperpal vs thesify: Finding the right AI writing reviewer can change how quickly and confidently you refine your research abstracts. To help you choose, we put Paperpal and thesify head-to-head on the same sociology abstract and compared their reviewer and chat features. Both tools offer broader capabilities, but here we focus solely on how well their reviewers analyze and improve a draft.