The Impact of Generative AI on Student Learning: Why the OECD Warns Against "Fast AI"

A striking finding in the OECD Digital Education Outlook 2026 is that students who practised maths with a generic chatbot performed better in the moment, yet scored up to 17% worse on subsequent closed-book exams than peers who studied alone. That gap captures the core tension in the current debate about the impact of generative AI on student learning. Adoption is rising quickly, but when students use "fast AI" to generate answers and polished text on demand, they can end up with an "illusion of competence" without the underlying understanding.

If you are worried about how “AI-written” is judged in practice, including false positives, see our guide on how professors detect AI in 2026.

This post unpacks why that happens. You will see how generic chatbots can encourage cognitive offloading, where key learning steps like diagnosing what you do not understand, testing your reasoning, and revising based on feedback get skipped. You will also see why the OECD argues for a shift toward "slow AI": purpose-built tools and workflows, like thesify, that keep students cognitively active through iterative, Socratic feedback and scaffolded support rather than one-shot generation, while making the grading process more manageable for educators.

The Illusion of Mastery: When AI Becomes a Crutch

One of the clearest patterns in the OECD’s evidence is a paradox common in modern academic settings: students can produce higher-quality work with generative AI, yet actually learn less.

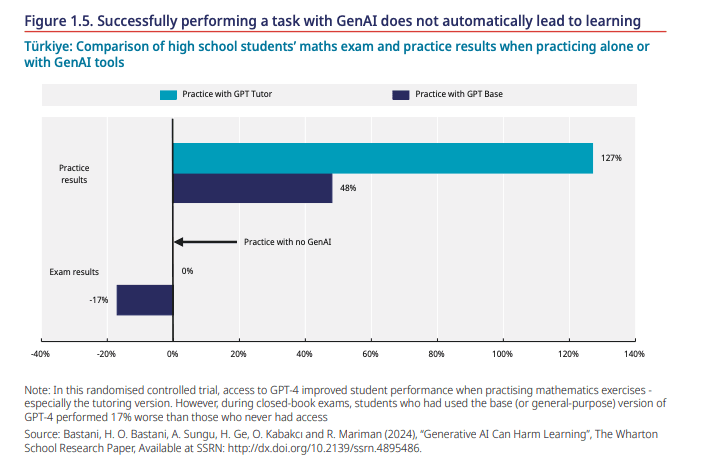

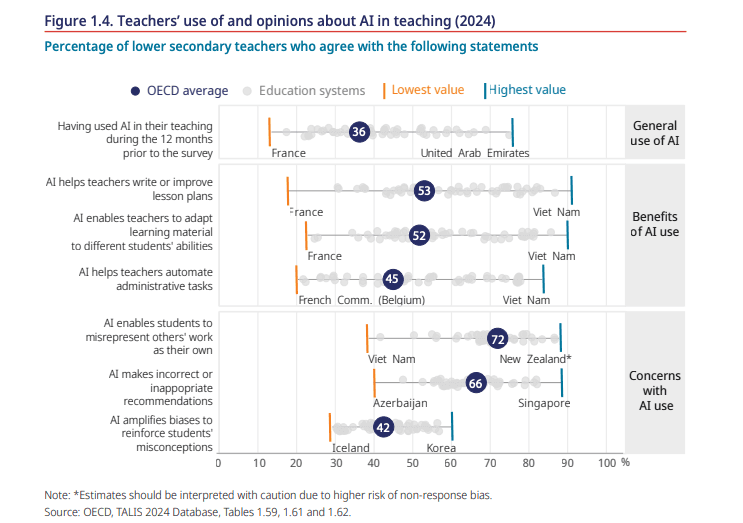

In a large-scale randomised trial involving high school students in Türkiye, access to GPT-4 improved performance during practice by up to 127%. However, those using the general-purpose version ultimately performed 17% worse on subsequent closed-book exams than peers who never had access to the tool.

The Performance-Learning Paradox: Data from a randomised controlled trial in Türkiye shows that generic AI tools can significantly boost immediate practice results while simultaneously harming long-term retention and exam performance.

This finding suggests that evaluating the impact of generative AI on student learning means looking past polished outputs to see if actual knowledge retention and skill transfer occur when the technology is removed.

Definition: The Impact of "Fast AI" on Learning

Cognitive Offloading: The act of using external tools to reduce the mental effort required to perform a task. While this can improve task performance in the short term, it risks displacing the cognitive effort and "productive struggle" needed to build durable skills and deep understanding.

Metacognitive Laziness: A specific form of cognitive offloading where a learner bypasses critical self-regulatory processes—such as diagnosing needs, evaluating information, and iterating on ideas—by uncritically accepting and implementing AI-generated solutions.

Metacognitive Laziness and Cognitive Offloading

When students use generic chatbots as a first resort, the most significant shift is not stylistic; it is cognitive. Many "fast AI" interactions encourage students to skip the vital steps that actually produce learning: diagnosing what they do not understand, testing whether an explanation is correct, and evaluating whether a proposed solution fits the task requirements.

Instead of working through uncertainty, students often jump straight to solution acquisition, implementing the response immediately. The OECD describes this pattern as metacognitive laziness in education, a form of cognitive offloading where the tool performs the reasoning work that the assignment was originally meant to elicit.

This matters because these diagnostic and evaluative steps are the exact mechanisms that transform information into durable understanding. In academic writing, for example, the process is typically iterative: you draft a claim, assess whether your evidence actually supports it, and refine your logic. If a chatbot supplies a polished paragraph, you bypass that loop entirely. While the output may look coherent, the underlying skills critical to identifying research gaps—such as argumentation, synthesis, and methodological clarity—are never actually practised.

Over time, this reliance has two predictable consequences for students:

Fragile Writing Quality: Students who rely on AI for structure, transitions, and argument logic often weaken their ability to generate and defend claims independently. The OECD highlights how AI-supported writing can reduce recall and a sense of ownership, leading to homogenised outputs across student cohorts. In academic contexts, this "sameness" prevents students from developing a distinctive voice and makes papers feel generic to reviewers.

Increased Academic Integrity Risks: Even without an intent to cheat, integrity risks rise when students cannot distinguish between support (brainstorming or revision prompts) and substitution (AI producing the core work). Teachers report growing concerns that AI enables students to misrepresent work, which may eventually undermine trust in the entire assessment process.

When the learning process is replaced by mere output generation, academic integrity becomes harder to define, enforce, and teach.

From “Fast AI” to “Slow AI”: The OECD’s Vision

The OECD’s core recommendation is not to “ban generative AI”. Instead, it is to stop treating general-purpose chatbots as neutral learning tools and move toward purpose-built educational AI designed around pedagogy. The report is explicit that generic systems carry significant risks for learning unless they are used with clear instructional intent or redesigned as educational tools that support skill acquisition rather than just answer production.

This distinction is vital because the issues previously described, including metacognitive laziness in education, are predictable outcomes of tools optimised for fluent output rather than meaningful learning processes.

The “Slow AI” design principle

The OECD introduces “slow AI” logic as a core learning design principle rather than a technical category. It refers to using AI in ways that keep students cognitively active through iteration, questioning, testing assumptions, and reflecting.

What is "Slow AI"?

Slow AI is an academic workflow where technology acts as a learning partner rather than an answer engine. It requires students to engage in iterative steps—such as generating alternative explanations, surfacing counterarguments, or justifying choices—to ensure the human remains the primary driver of the reasoning process.

Instead of a “fast AI” approach—typing a prompt and accepting the first polished result—the OECD identifies more promising outcomes when GenAI is combined with explicit pedagogical models, such as structured tutoring strategies or tools configured to guide learning rather than provide direct solutions.

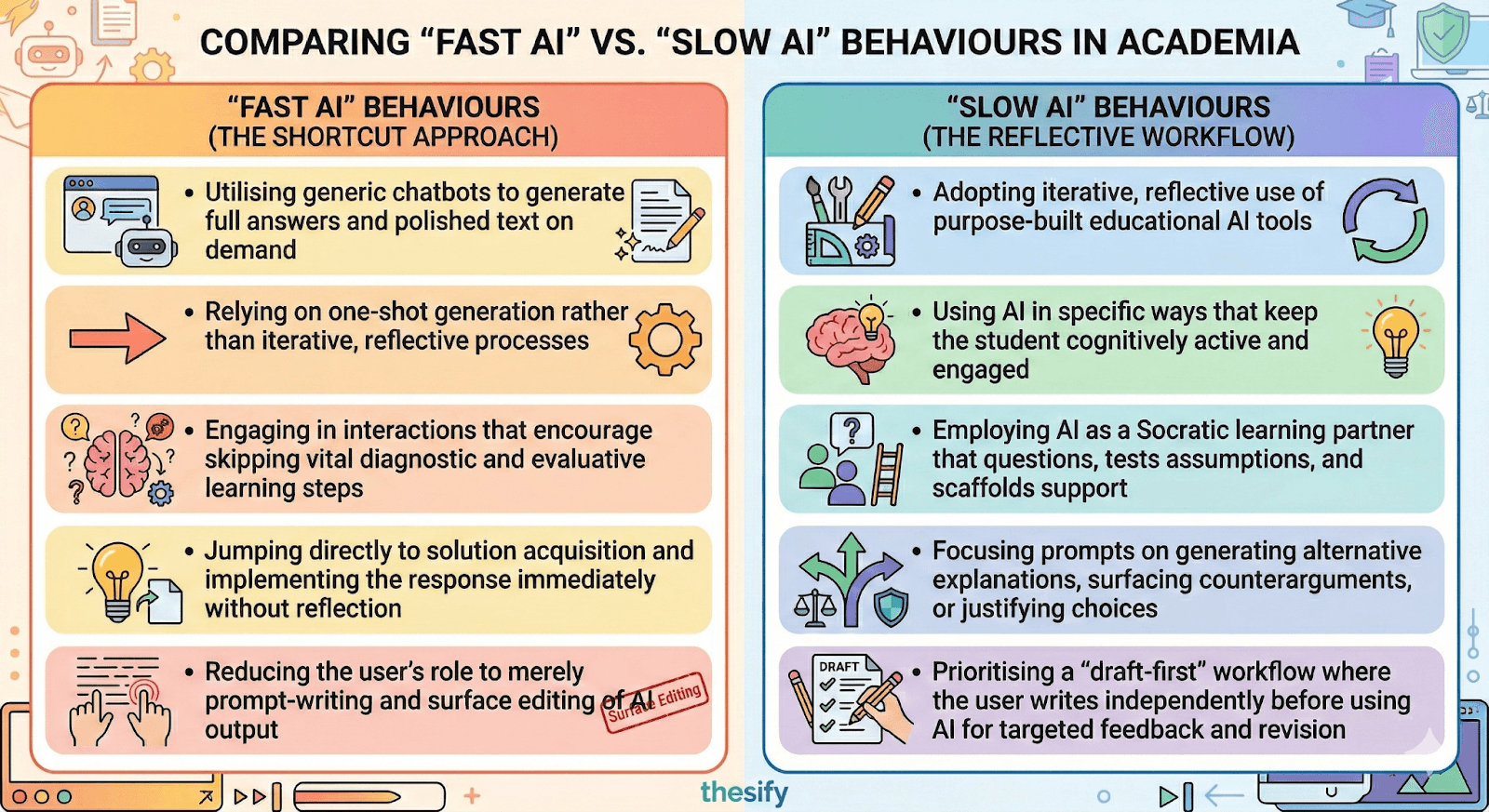

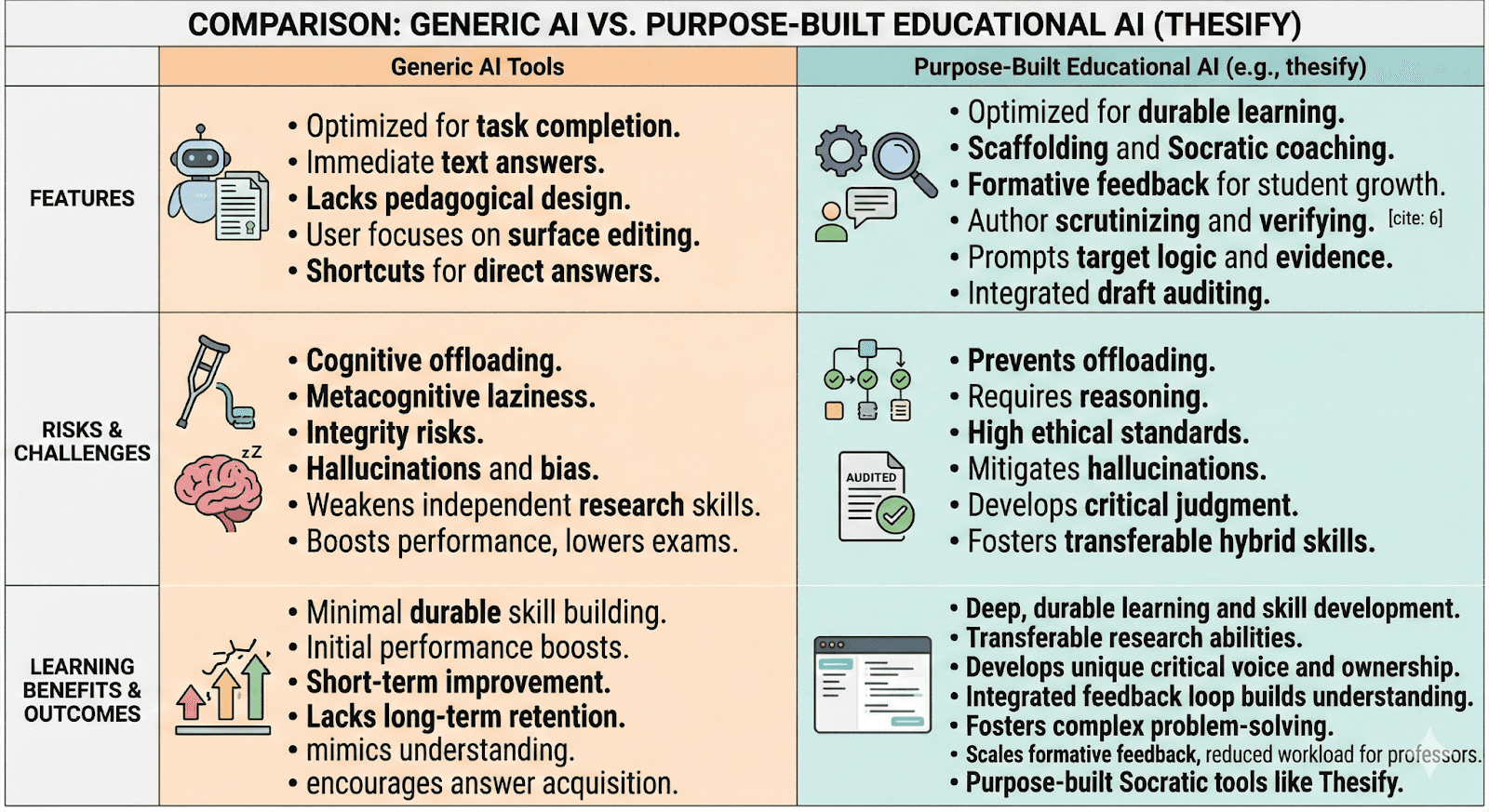

Choosing Your Workflow: While "Fast AI" prioritises immediate task completion at the cost of retention, "Slow AI" behaviours foster the hybrid human-AI skills necessary for durable learning and academic success.

FAQ: Does generative AI always harm learning?

No. The OECD’s concern is primarily about fast AI use, where students offload reasoning and skip evaluation. Learning outcomes look more promising when AI use is structured, iterative, and aligned with pedagogy, for example through Socratic prompting and guided revision. The difference is whether AI replaces the learning process or supports it.

Developing Hybrid Human-AI Skills

Successful AI integration depends on a division of labour where the human remains responsible for the "judgment" layer of work. This requires the cultivation of hybrid human-AI skills: a suite of metacognitive, critical, and ethical competencies that enable students to leverage AI for performance without sacrificing their own skill development.

In this framework, the researcher remains responsible for:

Deciding what counts as valid evidence.

Verifying sources and ensuring the work aligns with their institution’s standards for ethical AI use.

By designing workflows where the student remains the author and the AI supports revision and reflection, academic integrity becomes teachable again.

Ethics and Necessary Oversight

“Slow AI” also requires strict adherence to ethical guardrails. The OECD highlights persistent concerns regarding bias, the risk that AI can reinforce misconceptions, and the potential for compromised data security. To mitigate these, the report emphasises that users must never input sensitive data, must always verify factual claims, and must keep human judgment at the centre of all feedback and grading processes. Building these AI literacy and digital skills in higher education is now a prerequisite for responsible research.

For professor-facing policy templates and assessment practice, see Supervising with AI in 2025.

Educational AI in Action: How thesify Prevents Cognitive Offloading

The OECD’s core message is that if generative AI is going to support learning, it needs to be designed and used as a learning partner, not a shortcut. That is why the report repeatedly distinguishes between general-purpose chatbots and purpose-built educational systems that structure interaction around pedagogy, for example through tutoring strategies and evidence-centred assessment design. In practice, this shifts the question from “Which model is smartest?” to “Which workflow keeps the student cognitively responsible for the work?”

If you are deciding what to use in practice, see Choosing the Right AI Tool for Academic Writing: thesify vs. ChatGPT.

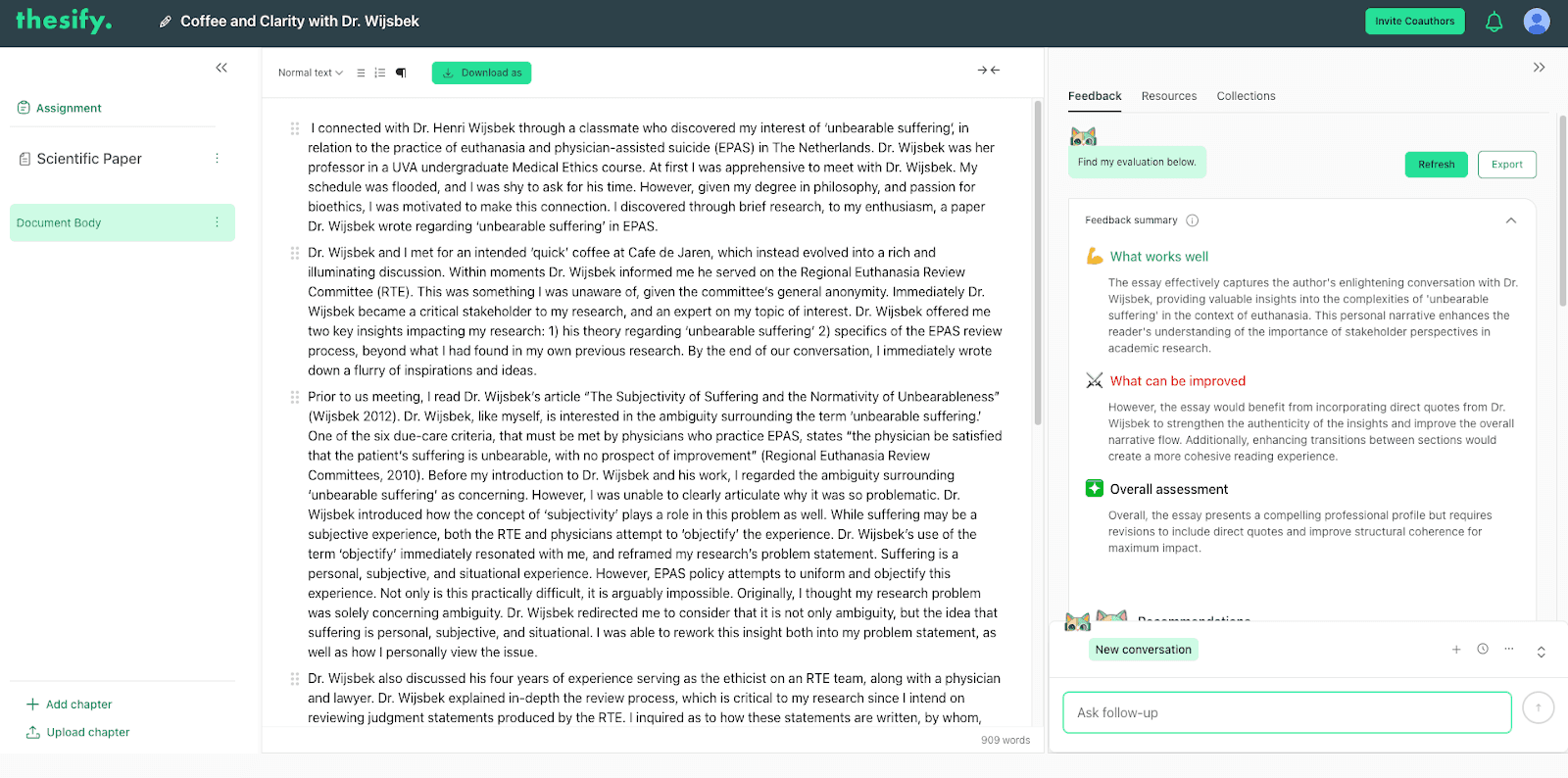

This is where educational AI tools like thesify become more relevant than generic chatbots for academic writing. The core design choice is simple: thesify is built to audit and coach drafts rather than write them. Instead of generating a full essay or rewriting a paragraph into a polished final version, thesify focuses on what academic work actually requires: a clear claim, defensible reasoning, appropriate evidence, and alignment with disciplinary expectations. That audit-first approach matters because it reduces cognitive offloading at the most harmful point in the writing process, when students would otherwise outsource the thinking that produces understanding.

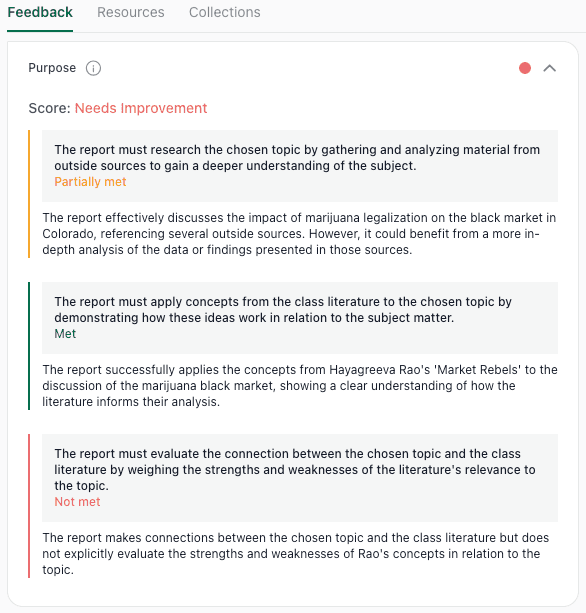

Example of thesify’s feedback summary, highlighting strengths and revision priorities to support formative feedback and reduce cognitive offloading.

In “fast AI” workflows, the student’s job can shrink to prompt-writing and surface editing. That is exactly the pattern the OECD associates with metacognitive laziness: skipping diagnosis, evaluation, and iteration, then implementing the solution immediately.

In contrast, a draft-auditing workflow forces a different sequence. You write first, then the tool points to weaknesses and asks you to make targeted revisions. Done well, this preserves ownership, keeps the student as the author, and builds hybrid human-AI skills where the AI supports reflection but the human makes the judgement calls.

How formative feedback operationalises “slow AI”

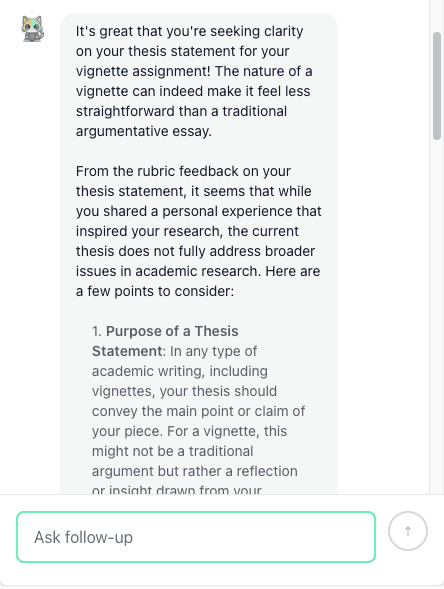

The OECD notes that more promising outcomes appear when GenAI is embedded in explicit pedagogical models, including Socratic-style tutoring and structured guidance. That maps closely to what formative feedback is supposed to do in academic writing: not deliver the answer, but help you see what you need to improve and why.

Choosing the Right Tool: While generic AI is designed for efficiency and answer delivery, purpose-built educational AI tools like thesify focus on scaffolding, formative feedback, and protecting the cognitive struggle necessary for genuine learning.

In practical terms, formative feedback that prevents offloading has three characteristics:

It diagnoses, rather than replaces.

It tells you what is missing (a warrant, a definition, a methodological justification) rather than supplying a finished paragraph. For design, sampling, and replicability checks, see Methods Section Feedback in thesify.

It prompts iteration.

It encourages you to revise in steps, testing whether your evidence supports your claim and whether your interpretation matches your results.

It preserves agency.

It keeps you responsible for decisions: what sources to cite, what claims you can defend, and how you justify choices.

That is the logic behind using thesify as a Socratic partner. The feedback should behave less like “here is the final version” and more like “what is your claim, what is your evidence, what assumption are you making, what would a reviewer push back on?”

This is also more closely aligned with how academic evaluation works. Reviewers and supervisors rarely tell you exactly what to write; they identify weaknesses, missing connections, and mismatches between claims and evidence.

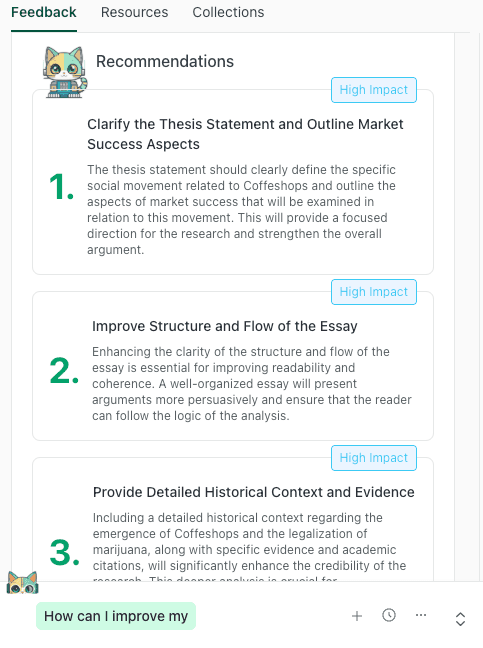

Audit, Don't Write: Rather than generating text, thesify provides high-impact recommendations that guide students to clarify their arguments, refine their structure, and strengthen their evidence through their own revision.

For a reviewer-style checklist you can apply today, see Scientific Paper Discussion Section Feedback: How to Stress-Test Your Claims.

Why this matters in a world of hallucinations and direct answers

The OECD is clear that general-purpose GenAI has inherent weaknesses that make it risky in academic contexts, including hallucinations, inconsistent outputs over time, and cultural bias embedded in training data. These issues become more damaging when the tool is used to generate content that students submit with minimal scrutiny.

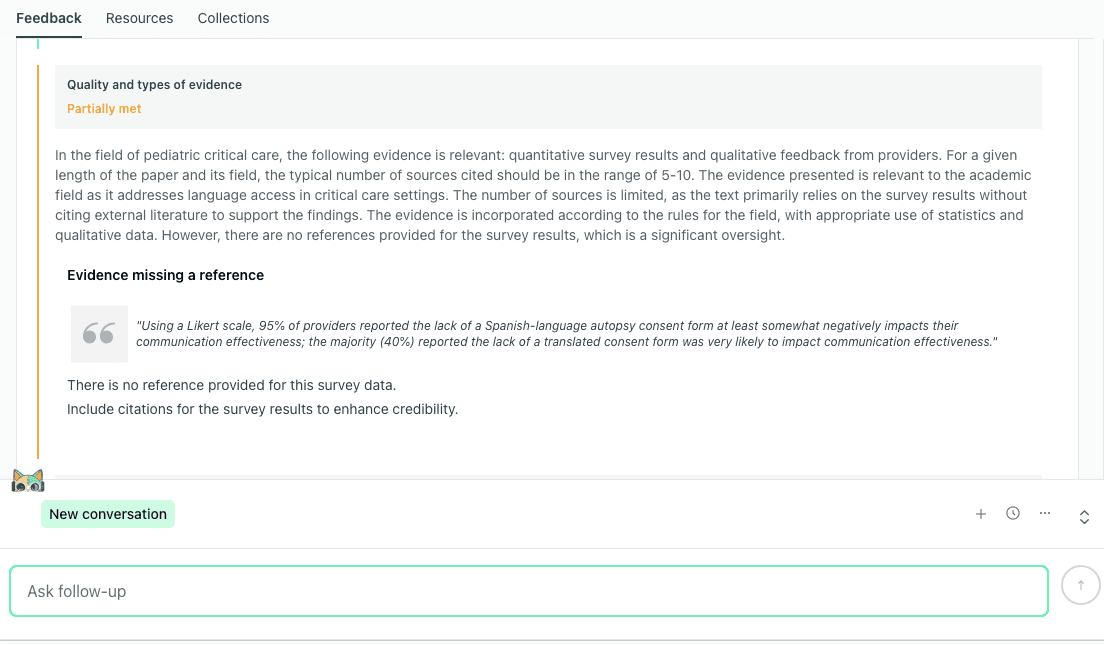

A purpose-built audit workflow changes the failure mode. When the system is designed to critique structure and reasoning, and to keep the student in an iterative loop, it is harder to “pass through” a confident-sounding but unsupported claim. The student is pushed toward verification: checking sources, tightening logic, and clarifying argument moves.

Beyond Direct Answers: thesify acts as a pedagogical auditor, identifying specific gaps in logic or missing citations to ensure students remain responsible for the academic rigor of their work.

Ultimately, thesify aligns with the OECD’s practical directive: move away from generic chatbots as default tools, and adopt systems that support the learning process through structured interaction and human judgement.

Supporting Professors: Making Formative Feedback Manageable

For many professors, the practical challenge is not just that students are using generative AI, but how to maintain rigorous standards when the time required for personalised feedback is already limited. The OECD Digital Education Outlook 2026 notes that AI can significantly improve teacher productivity, including measurable reductions in preparation time. One study cited in the report found a 31% reduction in time spent on lesson and resource planning among secondary science teachers in England.

OECD TALIS 2024 (Figure 1.4) summary of teachers’ AI use, perceived benefits (lesson planning, adapting materials), and concerns (plagiarism risk, incorrect recommendations, bias).

Evidence from a study within the OECD report (Baker et al., 2026) also suggests that AI teaching assistants can be especially valuable for less experienced instructors and tutors. In one trial, student pass rates improved more for low-experience tutors using AI support than for their more experienced counterparts. This suggests a realistic role for AI in higher education: not replacing teaching expertise, but helping educators deliver consistent, high-quality support at scale.

The OECD’s TALIS 2024 results make clear that adoption is uneven and concerns are real. On average across OECD countries, roughly 36% of lower secondary teachers reported using AI in their work. Most of this activity focuses on preparation tasks, such as summarising topics and generating lesson plans.

However, teachers also report significant anxiety regarding academic integrity, algorithmic bias, and data protection. Many feel they lack the specific skills to use AI well. The takeaway for higher education is that any AI-supported workflow must preserve teacher autonomy and avoid pushing the responsibility for professional judgement onto a tool. For classroom strategies and policy language, see Teaching Ethical AI in Academic Writing: A Guide for Professors and Instructors.

AI for formative feedback: A defensible workflow

This is exactly where AI for formative feedback is most effective. In a well-designed workflow, AI helps students improve their work before submission, allowing professors to concentrate their limited time on higher-order feedback rather than basic line edits.

What is Formative Feedback?

Formative feedback is guidance provided during the learning process to help students improve before a final assessment. It focuses on what needs revision and why, rather than simply assigning a final grade.

A practical integration model for how to give faster, higher-quality feedback using thesify looks like this:

Before submission: Students run drafts through thesify to receive rubric-aligned, reviewer-style feedback on argument structure and evidence-to-claim connections. This process is essential for turning vague feedback into actionable revisions before the professor ever sees the paper. If you want to share feedback with students or co-authors, use thesify’s downloadable feedback report to export a revision-ready PDF.

Granular Writing Audits: thesify evaluates student work against specific academic criteria, identifying precisely where a draft meets requirements and where it fails to evaluate or connect key concepts, ensuring students lead the revision process.

During grading: Professors receive improved drafts and can focus their comments on deeper disciplinary issues, such as theoretical framing and methodological reasoning, rather than correcting basic logic gaps.

For consistency: Departments can align expectations by encouraging students to use the same feedback categories and to learn how to apply feedback step by step using thesify’s Chat with Theo feature.

Conversational Coaching: Through Chat with Theo, thesify moves beyond static audits to provide interactive, Socratic guidance that helps students refine complex elements like thesis statements through critical reflection.

This approach supports workload reduction without resulting in "AI marking your paper". The professor remains the ultimate evaluator, while thesify acts as a structured coaching layer that keeps students doing the necessary thinking.

Conclusion: Using Educational AI Tools Without Cognitive Offloading

The OECD evidence clarifies the risk behind the current hype cycle. The impact of generative AI on student learning depends less on whether AI is used and more on how it is integrated into the workflow. “Fast AI” workflows, where students prompt, paste, and submit, create an illusion of mastery while reducing the cognitive effort that drives retention, reasoning, and academic development. This is cognitive offloading in practice, and it explains why students who use generic chatbots can perform better in the short term yet score up to 17% lower on subsequent exams.

The alternative is not a blanket ban. The OECD points toward educational AI tools that support the learning process through iterative revision, Socratic questioning, and formative feedback that keeps the student as the author. When AI is designed to diagnose gaps and prompt reflection, it supports academic integrity rather than undermining it. If you want criteria for what counts as academic-grade support, see AI Tools for Academic Research: Criteria to Identify Academic-Grade Tools.

To build durable hybrid human-AI skills, you must change your workflow: draft first, then use AI to pressure-test your claims, identify missing logic, and revise with intention. For educators, this means favouring systems that prioritise guidance on AI literacy and digital competence while maintaining high standards through responsible AI use in academic writing.

Sign up for thesify free today

Stop outsourcing your thinking. Upload your draft to thesify to receive structured, reviewer-style feedback that helps you revise with intention.

Related Posts

Choosing the Right AI Tool for Academic Writing: thesify vs. ChatGPT: Learn how thesify, and its AI assistant Theo, differs from generic ChatGPT. thesify’s tailored feedback and ability to suggest academic resources allow writers to strengthen their essays with well-supported evidence and deeper connections to theoretical frameworks. ChatGPT, by contrast, lacks the precision and actionable guidance needed to support meaningful improvements. Find out how ChatGPT’s limited contextual awareness compares with thesify’s feedback, which centres on identifying gaps and giving actionable guidance.

How Professors Can Effectively Integrate Generative AI in 2025: Get an overview of HEPI’s 2025 findings from a faculty viewpoint on student AI use. HEPI’s findings note that with 92% of students now using AI tools in some form, university faculty face a transformative moment. Instead of fearing AI's presence in education, professors who embrace its strengths can significantly enhance critical thinking, creativity, and meaningful engagement in their classrooms.

Teaching Ethical AI in Academic Writing: A Guide for Professors and Instructors: Faculty seeking practical advice on AI and assignments can consult thesify's comprehensive guide on teaching responsible AI use. Navigating AI in education requires a balanced approach. Professors who proactively teach responsible AI use in academic writing are not just preventing misconduct; they are empowering students with skills for the future. By clearly defining what ethical AI use looks like, implementing smart classroom strategies, and embracing tools like thesify that promote integrity, educators can turn a potential threat into a teaching opportunity.