How Do Professors Detect AI in 2026? Tools, Accuracy, and False Positives

How do professors detect AI in 2026? Increasingly, by combining institutional tools with process checks, citation verification, and voice consistency across assignments. Some reports suggest over 60% of higher education institutions have now implemented formal AI detection technology. Furthermore, UNESCO reports that nearly two-thirds of institutions have developed specific guidance on AI use, which now dictates how these detection tools are applied in disciplinary hearings.

This widespread adoption leaves many students anxious, especially since detection tools are not infallible. Research on human judgment reveals a related problem: people are often barely better than chance at identifying AI writing. Because of this "human-detection gap," institutions are leaning more heavily on algorithmic signals and metadata audits than on gut feeling alone.

Our 2026 guide explains how professors actually detect AI, including automated signals (perplexity, burstiness, classifier behaviour), manual checks (voice shifts, drafts, citations, revision history), and the most common AI detection tools used in higher education. It also breaks down what “accuracy” means, why false positives happen, how policies differ across universities, and what to do if your work is flagged.

If you want the baseline methods first, read our 2025 guide to how professors detect AI in academic writing, then come back here for the updated 2026 evidence and tool landscape.

What Is AI Detection? Understanding Perplexity and Burstiness

Definition (for quick reference): AI detection is the use of software to estimate whether text shows patterns that are more typical of machine-generated writing than human writing. The output is a probability signal, not a definitive verdict.

To navigate the 2026 academic landscape, it helps to start with a clear expectation: AI detection is not a “truth machine.” Most tools are designed to flag risk so an instructor can look more closely, and major providers warn that results can be inaccurate and should not be used as the sole basis for penalties.

AI detection also differs from plagiarism checking. A plagiarism checker looks for text overlap with sources in a database, then reports similarity. AI detectors, by contrast, look for writing-pattern signals that correlate with how large language models tend to produce text.

The Metrics: Perplexity and Burstiness

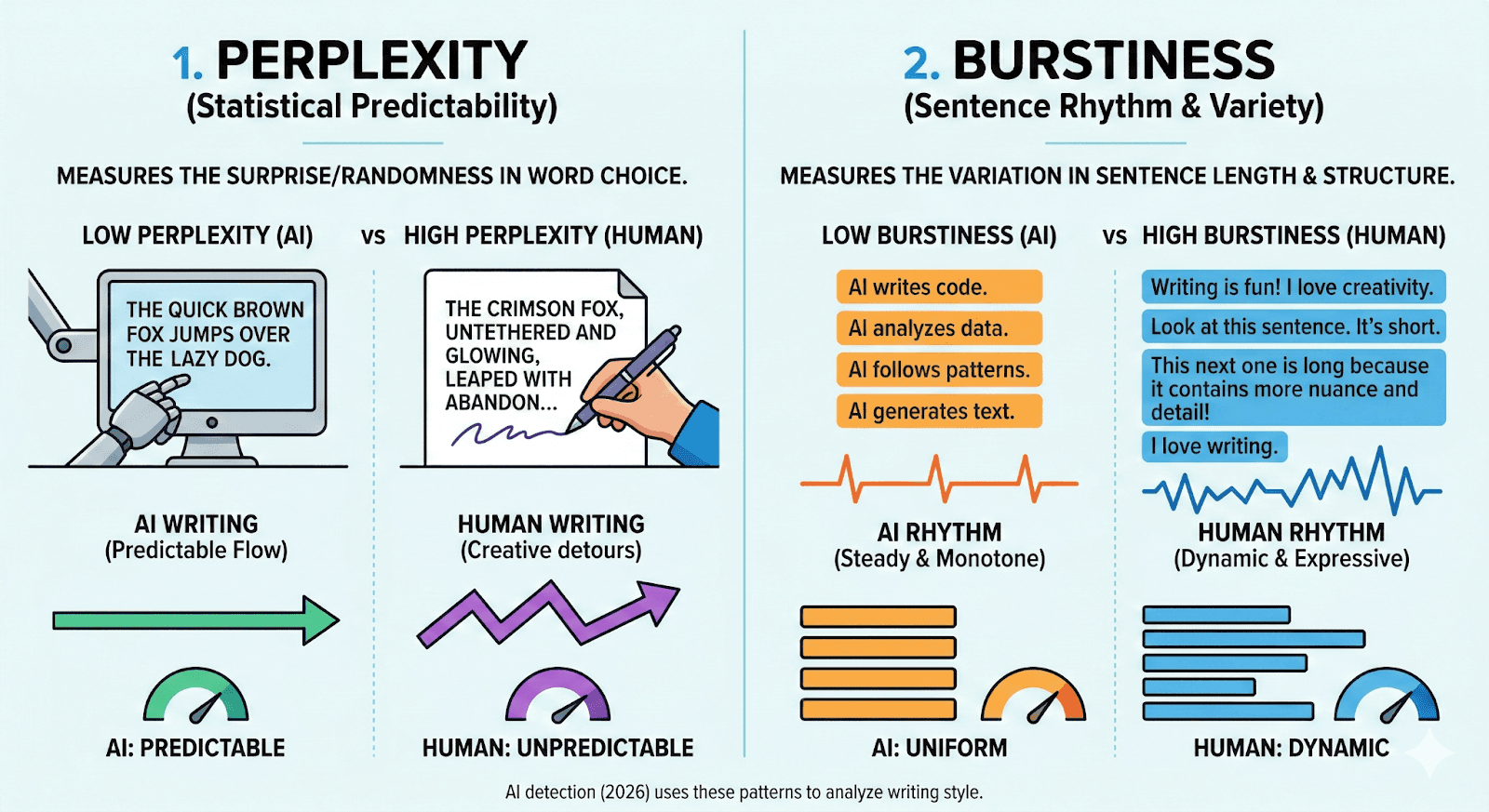

Many AI-detector explanations focus on two metrics you will see repeatedly in academic discussions of detection tools: perplexity and burstiness. In simple terms, they describe how predictable your language is and how variable your sentence rhythm is.

Perplexity measures how predictable a sequence of words is to a language model. When writing is highly predictable, perplexity tends to be lower. When writing includes more surprising or less expected choices, perplexity tends to be higher. In detector discussions, this matters because generated text often favors statistically likely next-word choices, especially in generic explanations.
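As a rough illustration of the idea, perplexity can be computed as the exponential of the average negative log-probability per token. The sketch below assumes you already have the probability a language model assigned to each token; the function name and the sample probabilities are hypothetical, and commercial detectors do not publish their exact scoring.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probability a model assigned to each token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-probability per token, then exponentiate.
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)

# Hypothetical per-token probabilities, invented for illustration only.
predictable_text = [0.9, 0.8, 0.85, 0.9]   # model found every word likely
surprising_text = [0.2, 0.05, 0.3, 0.1]    # model found the words unlikely

print(perplexity(predictable_text))
print(perplexity(surprising_text))
```

The predictable sequence yields the lower value, which is the pattern detectors associate with generated text.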

Burstiness refers to variation across sentences, including sentence length and structural variety. Human writing often shifts pace naturally, mixing shorter sentences with longer ones, and changing structure as the argument develops. More uniform pacing can be one signal that prompts a closer look, although it is not a reliable signal on its own.
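There is no single agreed formula for burstiness. One simple proxy, used here purely as an assumption for illustration, is the coefficient of variation of sentence length (standard deviation divided by mean). The function names and sample sentences below are hypothetical.

```python
import re
import statistics

def sentence_lengths(text):
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length (population std dev / mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for fewer than two sentences
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The tool scans the text. The score is then shown. The teacher reads the report."
varied = "It failed. Nobody on the team could explain why the score had jumped so sharply overnight. Odd."

print(burstiness(uniform))  # every sentence has the same length
print(burstiness(varied))   # lengths swing between short and long
```

A score near zero means very even pacing. As the text notes, that alone is not a reliable sign of AI use, since careful human writers can also produce uniform sentences.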

A 2026 comparison of AI and human linguistic signatures. AI detectors analyze low perplexity (predictable word choice) and low burstiness (monotone sentence rhythm) to identify machine-generated text.

The Critical Difference: AI Detection vs Plagiarism Checking

It is common to conflate AI detection with plagiarism detection, but they answer different questions:

Plagiarism checking: “Does this text match existing sources?” Tools compare your submission to databases of articles, websites, and past submissions, then report similarity.

AI detection: “Does this text look statistically like AI-generated writing?” Tools estimate likelihood based on patterns and then highlight sections that triggered the score.
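To make the plagiarism-checking side of this distinction concrete, here is a minimal sketch of overlap matching using shared word trigrams. It is an illustrative assumption about how similarity can be measured, not a description of any vendor's algorithm, and the function names and sample strings are hypothetical.

```python
def word_ngrams(text, n=3):
    """Return the set of lowercase word n-grams in a text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def overlap_share(submission, source, n=3):
    """Share of the submission's n-grams that also appear in the source."""
    submission_ngrams = word_ngrams(submission, n)
    if not submission_ngrams:
        return 0.0
    shared = submission_ngrams & word_ngrams(source, n)
    return len(shared) / len(submission_ngrams)

source = "detectors estimate whether text shows patterns typical of machine writing"
copied = "detectors estimate whether text shows patterns typical of machine writing"
original = "my essay argues that assessment design matters more than any score"

print(overlap_share(copied, source))    # identical wording, full overlap
print(overlap_share(original, source))  # no shared trigrams
```

An overlap measure like this says nothing about who or what wrote the text, which is why an original paper with no overlap can still receive a high AI-likelihood score.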

This difference matters because a paper can be fully original (low similarity) and still be flagged by AI detection if it is unusually uniform, overly generic, or inconsistent with your prior writing. It also matters because probability-based tools produce false positives, and research has shown that some detectors disproportionately misclassify non-native English writing as AI-generated.

If you use generative AI at any stage, reduce avoidable risk by following your institution’s guidance on transparent AI disclosure and citation. Our Ethical Use Cases of AI in Academic Writing guidance offers practical examples of this approach, recommending that you err on the side of transparency when citing generative AI use.

You can also cross-check expectations against thesify’s roundup of institutional rules in Generative AI Policies at the World’s Top Universities: October 2025 Update.

How AI Detection Evolved

AI detection developed as a necessary, high-stakes response to the rapid evolution of generative models and the resulting challenge of distinguishing student work from machine output. A useful starting point is 2019, when the introduction of GPT-2 raised the first significant concerns regarding "machine-written" text as a distinct category requiring policy responses.

However, the true inflection point arrived on November 30, 2022, with the public launch of ChatGPT. In the months that followed, education stakeholders moved quickly; for instance, the first generation of AI checkers appeared just a few months after ChatGPT’s arrival.

Early detection efforts were probabilistic and inconsistent across text types, which shaped current guidance to treat scores as indicators rather than proof. OpenAI’s own AI text classifier, released in early 2023, frequently mislabeled human text as AI and was withdrawn later that year because of its low accuracy.

Between 2024 and 2026, detection evolved into a continuous game of catch-up. Detectors are regularly updated as model behaviour changes, but independent evaluations show performance can still drop under domain shifts, revision, and adversarial edits. This evolution has moved detection from ad hoc "gut feeling" checks to fully operationalized institutional workflows.

Adoption of AI detection in academia is rising, but reliability varies across detectors, domains, and revision conditions. Official efficacy rates and accuracy statistics are often elusive, and when available, they frequently originate from the AI detection companies themselves. For instance, while some high-end tools claim accuracy as high as 99%, independent research often tells a more nuanced story.

This error margin is not evenly distributed; detectors disproportionately flag non-native English speakers—a group including millions of international and ESL students—because their clear, standardized sentence structures often mimic the low "perplexity" patterns detectors are trained to identify.

Because institutional rules vary wildly on what counts as 'acceptable assistance,' it is critical to understand the specific rules governing your campus. For a global perspective on how the landscape has shifted, see our analysis of Generative AI Policies at the World’s Top Universities: October 2025 Update.

Manual vs Automated AI Detection Methods Professors Use

In practice, professors rarely rely on a single signal to decide whether AI was used. Most use a combination of automated checks (software that produces probability scores) and manual judgment (what the writing looks like in context, and how it aligns with a student’s normal work).

Automated Detection: What The Tools Actually Flag

AI detectors scan text for patterns associated with machine-generated writing, then return a likelihood score. Many systems focus on statistical features such as predictability and uniformity (often discussed in terms of perplexity and burstiness), and they may highlight specific sentences that triggered flags.

The important detail for students is that these scores are indicators, not proof. Even major vendors caution that automated results should not be the sole basis for adverse action, because accuracy varies by text length, topic, and whether the writing has been edited.

Pro Tip: Stand Out From the "AI Slop"

When everyone has access to the same generative tools, submitting generic AI output no longer saves time; it makes your lack of effort highly visible. To learn how to make your critical thinking and original judgment stand out to professors, read our guide on Academic Writing in the Age of AI: How to Rise Above the Slop.

Manual Detection: The Red Flags Professors Look For

When professors suspect AI use, they often start with the simplest question: Does this sound like you?

That manual review typically includes checks like:

Sudden shifts in tone, formality, or vocabulary compared to your earlier work

Unusual structure or “too smooth” transitions that do not match your typical drafting habits

Generic arguments that stay high-level and avoid clear commitments

Citations that do not exist, do not support the claim, or are inconsistently formatted

Missing process evidence, such as no outline, no drafts, or a document that appears to be written in a single session

Suspicious process patterns, including unusually fast turnaround or minimal revision history

If something feels off, professors may compare the submission to earlier assignments, in-class writing, or past drafts. They may also ask you to explain key choices orally, for example why you used a specific concept, how you interpreted a finding, or where a citation came from.

Why Manual Detection Is Limited

Manual detection is not foolproof either. A 2025 study found that people distinguish AI-generated text from human writing only slightly better than chance, especially as model quality improves.

If you want to keep your writing recognisably yours while still using AI responsibly, check out our guide: Writing Excellence in the AI Era: Fostering Academic Writing Skills With Supportive Feedback.

Comparing AI Detection Tools

Universities typically use AI detection tools as screening and triage, then follow up with human review (your writing history, drafts, citations, and your ability to explain the ideas). That approach matters because even the most widely used systems warn that detection outputs are probabilistic, and misclassification can happen.

What Universities Commonly Use (and what each tool is for)

In higher education, the most common tool you will encounter is Turnitin, because it is already embedded in many institutional submission workflows. Turnitin’s own guidance is explicit that its AI writing detection “may not always be accurate” and “should not be used as the sole basis for adverse actions against a student.” A similar caution appears in university guidance. For example, the University of Twente advises staff not to treat Turnitin AI detection results as proof without further investigation.

Alongside Turnitin, educators also use standalone detectors such as GPTZero, Copyleaks, Originality.ai, ZeroGPT, Sapling, and academic-positioned tools like Proofademic (availability varies by institution).

Why “Accuracy” Claims Vary So Much

If you see headline accuracy numbers online, treat them as conditional. Peer-reviewed evaluation work shows why: the RAID benchmark (ACL 2024) finds that detectors can perform well on familiar distributions, yet become unreliable under adversarial attacks, changes in sampling strategy, and unseen models or domains. This is the practical reason your professor should not treat a single score as decisive, and why process evidence (drafts, notes, revision history) matters.

Table: Quick Comparison (2026 Snapshot)

The table below offers a quick comparison of the various AI detection tools used by professors and universities, based on the RAID benchmark (ACL 2024).

| Tool | Typical Use in Higher Ed | Output | Languages | Integrations | Notes on Reliability |
| --- | --- | --- | --- | --- | --- |
| Turnitin | Common | % indicator + highlights | Primarily English (implementation-dependent) | LMS workflows | Not always accurate, not sole basis for action |
| GPTZero | Common (varies by region) | Probability/score + highlights | Varies | Tool-based, some edu workflows | Robustness varies by text type and edits |
| Copyleaks | Common (some institutions) | Score + highlights | 100+ (company-stated) | LMS integrations (company-stated) | Robustness varies by domain/attacks |
| Originality.ai | Sometimes | Score + paragraph analysis | Varies | API/team features (company-stated) | Robustness varies by model/edits |
| ZeroGPT | Sometimes | % score | Varies | Limited | Results vary across detectors |
| Sapling | Sometimes | % likelihood + highlights | Varies | API + extensions (company-stated) | Robustness varies by text type |
| Proofademic | Sometimes | Detector output (tool-based) | Varies | Varies | Evaluate cautiously, use process checks |

Student Resource: Which Tools Are Actually Safe?

If you are trying to navigate the crowded landscape of AI writing assistants, you need to know which ones protect your academic integrity. Check out our head-to-head comparisons: Choosing the Right AI Tool for Academic Writing: thesify vs. ChatGPT and Jenni AI vs. Google Gemini: Comparing AI Tools for Academic Writing and Avoiding Cheating.

AI Detector Reliability: False Positives and Bias

If you are trying to interpret an AI detector score in 2026, start with a basic point from the research literature: detectors output a probability signal, and reliability depends on the benchmark setup, the threshold used, the domain, and whether the text has been revised. A large-scale evaluation paper introducing the RAID benchmark shows that detector performance can change substantially across models, domains, and adversarial edits, including edits that resemble real student revision.

The Three Metrics You Will See in Research

Most academic evaluations describe reliability using three standard classification metrics:

Precision: when the tool flags text as AI-generated, how often that flag is correct

Recall: how much AI-generated text the tool successfully detects

Accuracy: the overall proportion of correct classifications across human and AI text
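The three definitions above follow directly from the four cells of a confusion matrix. The sketch below uses hypothetical counts (100 AI-written and 100 human-written essays, invented for illustration) and also reports the false positive rate, since that is the figure that matters most to a wrongly flagged student.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute standard metrics from confusion-matrix counts.

    tp: AI text flagged as AI        fp: human text flagged as AI
    tn: human text passed as human   fn: AI text passed as human
    """
    total = tp + fp + tn + fn
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "accuracy": (tp + tn) / total if total else 0.0,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }

# Hypothetical counts, not results from any real detector.
metrics = classification_metrics(tp=80, fp=10, tn=90, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Note that a detector can post a respectable accuracy figure while still flagging one in ten human essays, which is why a single headline number is not enough to judge a tool.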

RAID-style evaluations also make the tradeoffs visible. Some detectors are tuned to keep false positives low, but tightening thresholds typically reduces recall, meaning more AI-generated passages slip through.
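The threshold tradeoff can be seen with a toy example. The scores below are made up for illustration and do not come from RAID or any real detector; the helper name is hypothetical. A text is flagged when its score meets or exceeds the threshold.

```python
def rates_at_threshold(human_scores, ai_scores, threshold):
    """Return (false positive rate, recall) when flagging scores >= threshold."""
    false_positive_rate = sum(s >= threshold for s in human_scores) / len(human_scores)
    recall = sum(s >= threshold for s in ai_scores) / len(ai_scores)
    return false_positive_rate, recall

# Made-up detector scores between 0 and 1, for illustration only.
human_scores = [0.05, 0.10, 0.20, 0.35, 0.55]
ai_scores = [0.40, 0.60, 0.70, 0.85, 0.95]

for threshold in (0.3, 0.5, 0.9):
    fpr, recall = rates_at_threshold(human_scores, ai_scores, threshold)
    print(f"threshold={threshold}: false positive rate={fpr:.1f}, recall={recall:.1f}")
```

With these toy numbers, raising the threshold from 0.3 to 0.9 removes every false positive but lets most of the AI-written samples through, which is the tradeoff described above.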

False Positives and the Real Risk to Students

False positives are not rare edge cases; they are part of the reliability problem that institutions need to manage. In the RAID evaluation, detector outputs can be destabilised by adversarial changes and shifts in generation settings, and performance can drop when text is edited or mixed with human writing.

Real-World Case Study: The Human Cost of False Positives

In a widely reported case by NPR, 17-year-old student Ailsa Ostovitz was wrongly accused of academic misconduct after an AI detector gave her original work a 30.76% probability score. Eventually, the teacher acknowledged the software’s error, but the case serves as a stark warning: AI detection scores are not definitive proof of cheating; they are "conversation starters".

Bias Against Non-Native English Writers

One of the best-documented limitations is bias against non-native English writing. Liang et al. (2023) find that widely used GPT detectors systematically misclassify non-native English writing as AI-generated, raising fairness concerns in educational contexts.

A 2026 follow-up reports a mean false positive rate of 61.3% for TOEFL essays written by Chinese students, compared with 5.1% for essays from US students in the same setup, and links this pattern to low-perplexity writing features that detectors tend to flag.
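To put those two rates in classroom terms, the sketch below estimates how many human-written essays would be wrongly flagged at each rate. The two rates are the ones quoted above; the cohort size of 100 is a hypothetical chosen for easy arithmetic, and real outcomes depend on the detector and threshold used.

```python
def expected_false_flags(num_human_essays, false_positive_rate):
    """Expected number of human-written essays wrongly flagged as AI."""
    return num_human_essays * false_positive_rate

FPR_TOEFL_ESSAYS = 0.613  # rate quoted in the text above
FPR_US_ESSAYS = 0.051     # rate quoted in the text above

cohort_size = 100  # hypothetical number of human-written essays per group

toefl_flags = expected_false_flags(cohort_size, FPR_TOEFL_ESSAYS)
us_flags = expected_false_flags(cohort_size, FPR_US_ESSAYS)
ratio = FPR_TOEFL_ESSAYS / FPR_US_ESSAYS

print(f"Expected false flags per {cohort_size} TOEFL essays: {toefl_flags:.1f}")
print(f"Expected false flags per {cohort_size} US essays: {us_flags:.1f}")
print(f"Relative risk of a false flag: about {ratio:.0f}x")
```

Under these figures, roughly 61 of 100 honest TOEFL essays would be flagged against about 5 of 100 US essays, a gap of about twelve to one.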

How to Protect Your Work If You Are Flagged

Focus on process evidence and transparency:

Keep outlines, notes, and intermediate drafts, even if messy

Draft in a tool with version history so your writing process is visible

Be ready to explain your sources and reasoning in your own words

Ask for a contextual review that considers your drafts and prior work

If you are unsure whether your AI use crosses the line, use When Does AI Use Become Plagiarism? A Student Guide to Avoiding Academic Misconduct.

How AI Policies Differ Across Universities

In 2026, university AI policies are rarely consistent across countries, institutions, and even individual courses. Some departments restrict generative AI outright, others allow limited use with clear disclosure, and many fall somewhere in between. The fastest way to avoid academic integrity issues is to stop relying on assumptions and instead check three things before you start: your course syllabus, your department guidance, and your university-wide policy.

If you want a credible baseline for how rules differ across institutions, use thesify’s tracker: Generative AI Policies at the World’s Top Universities: October 2025 Update.

Acceptable Use vs Academic Misconduct

A simple way to think about “allowed” versus “not allowed” is whether AI is supporting your thinking or replacing it. If you are unsure where the line is, start with When Does AI Use Become Plagiarism? A Student Guide to Avoiding Academic Misconduct.

Commonly acceptable AI uses (policy-dependent):

Brainstorming topics, outlines, or counterarguments

Generating “tutor-style” questions to test your understanding

Language-level support like grammar checks and clarity edits

Commonly prohibited AI uses (policy-dependent):

Submitting AI-generated prose as your own writing

“Humanising” AI text to evade detection or hide authorship

Using AI to generate sources, citations, or analysis you cannot independently justify

Failing to disclose AI use when your course requires disclosure

For practical workflows that keep you inside the rules, also see 9 Tips for Using AI for Academic Writing (without cheating) and tool-specific guidance in Choosing the Right AI Tool for Academic Writing: thesify vs. ChatGPT.

Best Practices for Defensive Writing

If you want your work to be defensible in case a detector flags it, treat your writing process like evidence:

Document your workflow: save outlines, prompts you used, and rough drafts (even messy ones).

Use version history: draft in an editor that records incremental writing and revisions.

Edit for voice and specificity: add course concepts, local context, and personal reasoning that reflects your actual learning. If you struggle with “sounding like you,” use How to Preserve Your Academic Voice While Using AI Writing Tools.

Make your process verifiable: follow the checklist in our Simple Audit Trail for AI Use.

Template: AI-Use Disclosure Statement

Place this in a footnote, appendix, or methods-style note where appropriate (and where your course expects it):

AI-Use Disclosure Statement

“I used [Tool Name] during the [brainstorming/outlining/editing] phase to [specific task]. I wrote and revised the final draft myself. All arguments, interpretations, and conclusions are my own.”

Guidance for Educators: Fair Assessment and AI-Resistant Assignments

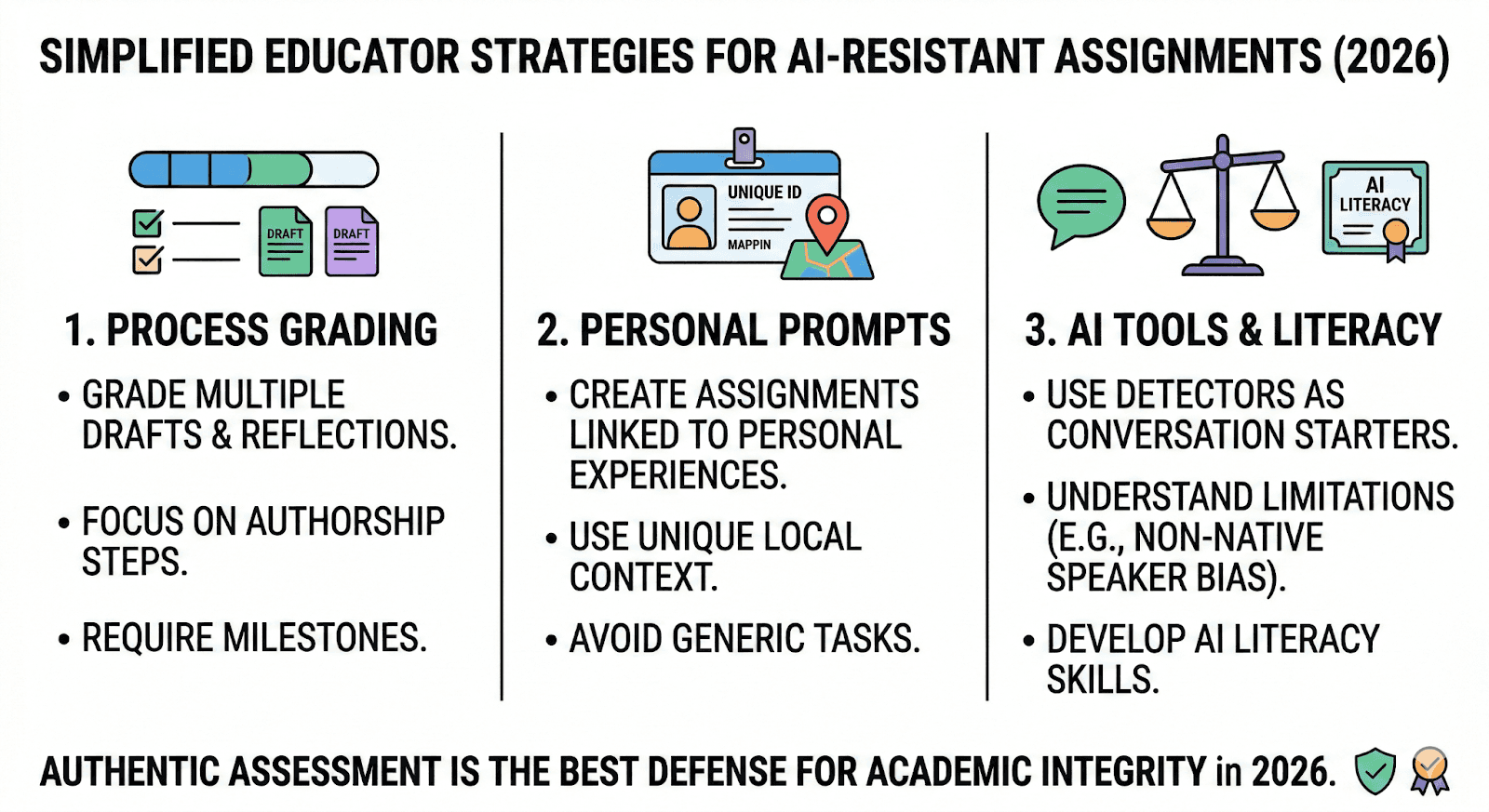

As generative AI becomes more capable, assessment strategies that rely on AI detection alone are less likely to hold up in high-stakes academic integrity cases. In 2026, the most defensible approach is to design coursework that makes student thinking visible, then use detection tools (if your institution supports them) as one input among many.

Educator Resource: Moving from Panic to Policy

Are you currently rewriting your syllabus for the new academic year? Learn how to establish a clear, responsible AI policy and integrate tools ethically in your classroom by exploring our guide: Teaching Ethical AI in Academic Writing: A Guide for Professors and Instructors. Alternatively, if you are working with graduate students, see our specific frameworks in Designing Graduate-Level AI-Inclusive Assignments.

Designing for Academic Integrity

AI-resistant assignments are rarely about “trick questions.” They are about requiring evidence of learning that a tool cannot plausibly fabricate without the student understanding the material.

Practical AI-resistant or AI-inclusive assignment options include:

Grade the process, not just the final paper: require milestones such as an outline, annotated bibliography, and at least one revision cycle. This makes authorship easier to evaluate and reduces disputes.

Personalize prompts to reduce generic outputs: ask students to apply concepts to a local case, a class dataset, or a specific reading set discussed in seminars.

Add a short oral component: a five-minute oral defense, recorded reflection, or seminar Q&A can verify whether the student can explain their reasoning and sources.

Create in-class writing benchmarks: a supervised baseline writing task gives instructors a fair reference point for voice, structure, and typical language proficiency.

If you want templates and policy language for course design, see thesify’s practical guide: Supervising with AI in 2025: Policies, Templates, and Assessment Practice.

If you are redesigning assessment rather than policing it, two practical templates are No AI Assignments Built to Be AI-Resistant and Designing Graduate-Level AI-Inclusive Assignments.

Using AI Detection Tools as a Conversation Starter

If an AI detector flags an assignment, treat the score as a prompt for review, not as conclusive proof. A fair follow-up is a structured conversation: ask the student to explain key paragraphs, justify sources, and describe how the draft developed. This shifts the interaction from “gotcha” enforcement to evidence-based academic integrity and, in many cases, leads to better learning outcomes.

Build AI Literacy Into Staff Practice

Faculty development matters because detectors can produce false positives and may disproportionately flag non-native English writing. Training that covers basic detector limitations, bias awareness, and documentation expectations helps departments apply policies consistently and reduce unfair outcomes.

Moving beyond software detection, educators in 2026 are focusing on authentic assessment design, including process-based grading and personalized prompts, to verify original authorship.

Looking Ahead: The Future of AI Detection

In 2026, research on LLM-generated text detection is converging on a consistent conclusion: the hardest problems are no longer “can we detect AI at all,” but whether detection holds up under real coursework conditions, including domain shifts, mixed authorship, and adversarial edits. Survey work in Computational Linguistics highlights out-of-distribution failures and adversarial attacks as persistent obstacles, even as detectors improve on clean, benchmark-style text.

The Rise of Adversarial Evasion

One of the clearest pressure points is adversarial evasion, where small changes to a document cause large drops in detector performance. The RAID benchmark paper (ACL 2024) evaluates multiple detectors across models, domains, decoding strategies, and adversarial attacks, and reports that several detectors degrade substantially under homoglyph attacks, where visually similar characters from other scripts replace Latin letters.

A dedicated study on homoglyph-based evasion shows these substitutions can circumvent multiple detectors across datasets, reinforcing that the weakness is structural (tokenization and feature sensitivity), not simply “bad student behavior.”
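For readers unfamiliar with homoglyphs, the sketch below shows why they are hard to spot by eye and how a simple script check can surface them. It is a minimal heuristic built on Python's standard `unicodedata` module, not the method used in the studies cited above, and the function names are hypothetical. It can also flag legitimately multilingual words, so treat any hit as a prompt for a closer look.

```python
import unicodedata

def letter_script(ch):
    """Rough script label: the first word of the character's Unicode name."""
    try:
        return unicodedata.name(ch).split()[0]
    except ValueError:  # raised when a character has no Unicode name
        return "UNKNOWN"

def mixed_script_words(text):
    """Return words whose letters come from more than one script."""
    flagged = []
    for word in text.split():
        scripts = {letter_script(ch) for ch in word if ch.isalpha()}
        if len(scripts) > 1:
            flagged.append(word)
    return flagged

clean = "paper"
spoofed = "p\u0430per"  # U+0430 is CYRILLIC SMALL LETTER A, visually like Latin "a"

print(clean == spoofed)             # the strings differ despite looking alike
print(mixed_script_words(clean))
print(mixed_script_words(spoofed))
```

Because the two strings are different sequences of code points, a detector's tokenizer sees the spoofed word as something else entirely, which is consistent with the structural weakness described above.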

If you are writing or grading in this environment, the practical takeaway is simple: detector scores become less informative as soon as text is edited, merged, or manipulated, and editing and merging are exactly what normal drafting and revision look like.

From Enforcement to AI Fluency

Given these constraints, the most defensible direction is a shift toward AI fluency standards and process-based evaluation. Detection research increasingly treats hybrid, human-in-the-loop settings as the realistic target, rather than a clean “human vs AI” binary.

In coursework, that usually means more emphasis on:

draft trails (outlines, iterations, revision history)

source accountability (can the writer justify citations and reasoning)

short oral checks for high-stakes submissions (can the student explain choices)

To track how expectations vary by institution, use thesify’s policy roundup: Generative AI Policies at the World’s Top Universities: October 2025 Update. For assessment design that makes process visible, see Designing Graduate‑Level AI‑Inclusive Assignments.

In 2026, the future of AI detection depends less on any single detector and more on robust assessment design. Adversarial edits like homoglyph swaps can substantially reduce detector performance, so academic integrity is increasingly supported by process evidence, disclosure, and verifiable drafting practices.

Frequently Asked Questions: AI Detection in 2026

This expanded FAQ addresses the most common concerns students and educators have regarding the current state of AI writing audits and academic integrity.

Can Turnitin detect GPT-5 or Gemini 2.0 text?

There is no reliable way to guarantee detection of a specific model or version. Detectors are trained and evaluated on particular datasets and conditions, and performance can vary across domains, revision levels, and adversarial edits. If your institution uses a detector, treat its score as a prompt for review. Many institutions now place more weight on process evidence (draft history, source accountability, and oral checks) than on the score alone.

How accurate are AI detectors in 2026?

Reliability remains a moving target. While some providers claim accuracy as high as 99%, independent research reports that accuracy can drop to roughly 60–80% once a student manually edits or "humanizes" the text. Detection is probabilistic: it is a prediction, not a certainty.

What does a “false positive” mean, and how common is it?

A false positive is when human-written text is labeled as AI-generated. Research has documented that false positives can be a meaningful risk, particularly for multilingual writers, and that detector thresholds often involve tradeoffs between catching AI text and avoiding mistaken accusations.

Do AI detectors flag non-native English writing more often?

This risk is documented in the research literature. Studies show some GPT detectors misclassify non-native English writing at higher rates than native writing, raising fairness concerns in educational assessment.

Can professors tell if I used AI without using software?

Often, yes. Professors look for "manual red flags" such as:

Hallucinated Citations: Sources or quotes that do not exist.

Abrupt Voice Shifts: A sudden jump from your usual writing style to a very clinical, "AI-sounding" tone.

Content "Drift": The essay starts strong but begins to repeat generalities without getting specific.

Can a paper be original and still be flagged for AI?

Yes. Plagiarism detection and AI detection test different things. A paper can have low similarity to existing sources and still be flagged if the writing patterns look statistically similar to machine-generated text, or if the process evidence is missing.

For the policy side of what schools consider acceptable, see thesify’s tracker: Generative AI Policies at the World’s Top Universities: October 2025 Update.

What should I do if my assignment is flagged for AI?

If your work is flagged and you didn't use AI, follow this four-step protocol:

Request the Report: Ask to see the specific detection score and which parts were flagged.

Share Your Draft History: Provide your Google Docs or Word version history to prove the "human pace" of your writing.

Offer a Comparison: Show the professor your previous work to demonstrate that the style is consistent with your natural voice.

Ask for a "Logic Audit": Offer to verbally explain your research process and specific phrasing choices to prove you understand the content.

Is it "cheating" to use AI for brainstorming?

This depends entirely on your university's policy. Most 2026 guidelines allow AI for brainstorming, outlining, or grammar checks, provided you disclose the use of the tool. However, submitting AI-generated prose as your own is treated as academic misconduct under most policies. Always check your syllabus for the "Green/Yellow/Red" AI status of your specific assignment.

How can I reduce the risk of being flagged if AI is allowed?

Follow your syllabus and institutional policy, then disclose AI use if required.

Use AI for brainstorming or outlining, not for generating your final prose.

Keep an AI audit trail: outline, sources, drafts, and revision history.

Make your reasoning specific: define terms, justify claims, and connect evidence to your argument.

Verify citations manually and avoid placeholder references.

How do I disclose AI use in an assignment?

Policies differ, but a concise disclosure usually states the tool, the stage (brainstorming, outlining, language edits), and what you did yourself. For boundaries and examples, see When Does AI Use Become Plagiarism? A Student Guide to Avoiding Academic Misconduct.

Are there better assessment methods than AI detection tools?

Research and practice increasingly point toward process-based grading, oral components, and assignment design that makes thinking visible, especially because detector performance can degrade under real-world conditions and adversarial edits.

For more assignment redesign ideas, start here: No AI Assignments Built to Be AI-Resistant and Designing Graduate‑Level AI‑Inclusive Assignments.

Conclusion: How Professors Detect AI in 2026

In 2026, AI detection by professors is rarely a single-tool decision. Instructors combine automated signals with human review, looking at pattern-based indicators, citation quality, and whether the writing aligns with your prior work and drafting process. Research on LLM-generated text detection also shows why reliability varies across domains and editing conditions, so scores should be treated as prompts for review rather than definitive proof.

The safest approach is transparency. If your course permits AI, use it for brainstorming, outlining, and clarification, then do the argumentation and interpretation yourself. Keep drafts and revision history, and be prepared to explain key choices in your own words. If you are unsure about what is allowed, ask early. A short conversation with your professor can prevent misunderstandings and protect your academic standing.

Write with integrity, not shortcuts

If you want structured feedback on your arguments, clarity, and citations, without an AI generating the text for you, sign up for thesify free today.

Related Resources

How Professors Detect AI in Academic Writing (And How to Use AI Responsibly): AI is changing education, but it must be used responsibly. Professors can and do detect AI-generated content, and universities are implementing stricter policies. AI detection tools scan writing for patterns, probability scores, and unnatural phrasing to determine the likelihood of AI involvement. Using AI as a tool rather than a shortcut ensures that students remain ethical while still benefiting from technological advancements. Learn more about AI detection tools, why using AI unethically is not worth the risk, and how to use AI in a way your professor will approve of.

Generative AI Policies at the World’s Top Universities: October 2025 Update: Explore the updated October 2025 edition of thesify’s guide to generative AI policies at the world’s top universities. See new rankings, rules and tips. Find out why universities care about generative AI, how to navigate university AI policies (best practices and student tips), and FAQs: common questions about university AI policies. Learn more about academic integrity, skill development, data privacy, reputation concerns, and university AI policy rationale.

When Does AI Use Become Plagiarism? A Student Guide to Avoiding Academic Misconduct: Many students wonder: Is paraphrasing AI plagiarism? AI-powered paraphrasing tools, such as QuillBot or Wordtune, can reword existing content to avoid direct copying. However, if an AI tool rewrites a passage from a source without properly citing it, this is still plagiarism. Even if the words are different, the ideas and structure remain the same. Some universities even classify AI-generated submissions as contract cheating, the same category as hiring someone to write an essay for you. Find out more about AI use and plagiarism and staying on the right side of